Last updated on June 3, 2026 pm

本文为 SJTU-CS3612 机器学习课程的笔记整理 ,主要聚焦于公式及其推导过程。

Lecture 1: Linear Model

1.1 Linear regression

假设有 n n n { x i 1 , x i 2 , … , x i p , y i } i = 1 n \{x_{i1}, x_{i2}, \dots, x_{ip}, y_i\}_{i=1}^n { x i 1 , x i 2 , … , x i p , y i } i = 1 n

y i = β 0 1 + β 1 x i 1 + β 2 x i 2 + ⋯ + β p x i p + ε i = ∑ j = 0 p x i j β j + ε i y_i = \beta_0 1+\beta_1 x_{i 1}+\beta_2 x_{i 2} +\cdots+\beta_p x_{i p}+\varepsilon_i =\sum_{j=0}^p x_{i j} \beta_j+\varepsilon_i

y i = β 0 1 + β 1 x i 1 + β 2 x i 2 + ⋯ + β p x i p + ε i = j = 0 ∑ p x ij β j + ε i

或者表示为矩阵形式:

y = X ⊤ β + ε \mathbf{y}=\mathbf{X}^{\top} \boldsymbol{\beta}+\boldsymbol{\varepsilon}

y = X ⊤ β + ε

其中

X ⊤ = [ x 1 ⊤ x 2 ⊤ ⋮ x n ⊤ ] = [ 1 x 11 ⋯ x 1 p 1 x 21 ⋯ x 2 p ⋮ ⋮ ⋱ ⋮ 1 x n 1 ⋯ x n p ] , y = [ y 1 y 2 ⋮ y n ] , β = [ β 1 β 2 ⋮ β p ] , ε = [ ε 1 ε 2 ⋮ ε n ] \mathbf{X}^\top =\begin{bmatrix}

\mathbf{x}_1^{\top} \\

\mathbf{x}_2^{\top} \\

\vdots \\

\mathbf{x}_n^{\top}

\end{bmatrix}=\begin{bmatrix}

1 & x_{11} & \cdots & x_{1 p} \\

1 & x_{21} & \cdots & x_{2 p} \\

\vdots & \vdots & \ddots & \vdots \\

1 & x_{n 1} & \cdots & x_{n p}

\end{bmatrix}, \quad \mathbf{y}=\begin{bmatrix}

y_1 \\

y_2 \\

\vdots \\

y_n

\end{bmatrix}, \quad

\boldsymbol{\beta}=\begin{bmatrix}

\beta_1 \\

\beta_2 \\

\vdots \\

\beta_p

\end{bmatrix}, \quad \boldsymbol{\varepsilon}=\begin{bmatrix}

\varepsilon_1 \\

\varepsilon_2 \\

\vdots \\

\varepsilon_n

\end{bmatrix}

X ⊤ = x 1 ⊤ x 2 ⊤ ⋮ x n ⊤ = 1 1 ⋮ 1 x 11 x 21 ⋮ x n 1 ⋯ ⋯ ⋱ ⋯ x 1 p x 2 p ⋮ x n p , y = y 1 y 2 ⋮ y n , β = β 1 β 2 ⋮ β p , ε = ε 1 ε 2 ⋮ ε n

1.2 Logistic regression

假设有 n n n { ( X i , y i ) } i = 1 n \{(X_i, y_i)\}_{i=1}^n {( X i , y i ) } i = 1 n y i ∈ { 0 , 1 } y_i \in \{0, 1\} y i ∈ { 0 , 1 } X i X_i X i

Pr ( y i = 1 ∣ X i ) = p i , Pr ( y i = 0 ∣ X i ) = 1 − p i \operatorname{Pr}(y_i = 1|X_i) = p_i, \quad \operatorname{Pr}(y_i = 0|X_i) = 1 - p_i

Pr ( y i = 1∣ X i ) = p i , Pr ( y i = 0∣ X i ) = 1 − p i

Logistic regression 的分类公式是:

p i = sigmoid ( X i ⊤ β ) = e X i ⊤ β 1 + e X i ⊤ β = 1 1 + e − X i ⊤ β p_i=\operatorname{s igmoid}\left(X_i^{\top} \beta\right)=\frac{e^{X_i^{\top} \beta}}{1+e^{X_i^{\top} \beta}}=\frac{1}{1+e^{-X_i^{\top} \beta}}

p i = sigmoid ( X i ⊤ β ) = 1 + e X i ⊤ β e X i ⊤ β = 1 + e − X i ⊤ β 1

设每个样本的真实标签为 y i ∗ y_i^* y i ∗

Pr ( y i = y i ∗ ∣ X i ) = p i y i ∗ ( 1 − p i ) 1 − y i ∗ = ( e X i ⊤ β 1 + e X i ⊤ β ) y i ∗ ( 1 − e X i ⊤ β 1 + e X i ⊤ β ) 1 − y i ∗ = e y i ∗ X i ⊤ β 1 + e X i ⊤ β \operatorname{Pr}\left(y_i=y_i^* | X_i\right)=p_i^{y_i^*}\left(1-p_i\right)^{1-y_i^*}=\left(\frac{e^{X_i^{\top} \beta}}{1+e^{X_i^{\top} \beta}}\right)^{y_i^*}\left(1-\frac{e^{X_i^{\top} \beta}}{1+e^{X_i^{\top} \beta}}\right)^{1-y_i^*}=\frac{e^{y_i^* X_i^{\top} \beta}}{1+e^{X_i^{\top} \beta}}

Pr ( y i = y i ∗ ∣ X i ) = p i y i ∗ ( 1 − p i ) 1 − y i ∗ = ( 1 + e X i ⊤ β e X i ⊤ β ) y i ∗ ( 1 − 1 + e X i ⊤ β e X i ⊤ β ) 1 − y i ∗ = 1 + e X i ⊤ β e y i ∗ X i ⊤ β

采用最大似然估计(MLE) ,目标是所有样本全部预测正确的概率最大,即

Pr ( β ) = ∏ i = 1 n p i y i ∗ ( 1 − p i ) 1 − y i ∗ = ∏ i = 1 n e y i ∗ X i ⊤ β 1 + e X i ⊤ β \operatorname{Pr}(\beta)=\prod_{i=1}^n p_i^{y_i^*}\left(1-p_i\right)^{1-y_i^*}=\prod_{i=1}^n \frac{e^{y_i^* X_i^{\top} \beta}}{1+e^{X_i^{\top} \beta}}

Pr ( β ) = i = 1 ∏ n p i y i ∗ ( 1 − p i ) 1 − y i ∗ = i = 1 ∏ n 1 + e X i ⊤ β e y i ∗ X i ⊤ β

取对数,得

log Pr ( β ) = ∑ i = 1 n [ y i ∗ X i ⊤ β − log ( 1 + exp X i ⊤ β ) ] \log \operatorname{Pr}(\beta)=\sum_{i=1}^n\left[y_i^* X_i^{\top} \beta-\log \left(1+\exp X_i^{\top} \beta\right)\right]

log Pr ( β ) = i = 1 ∑ n [ y i ∗ X i ⊤ β − log ( 1 + exp X i ⊤ β ) ]

即优化目标变为

arg max β Pr ( β ) ≡ arg max β log Pr ( β ) \argmax_\beta \operatorname{Pr}(\beta) \equiv \argmax_\beta \log \operatorname{Pr}(\beta)

β arg max Pr ( β ) ≡ β arg max log Pr ( β )

1.3 Classification

如果分类标签 y i ∈ { + 1 , − 1 } y_i \in \{+1, -1\} y i ∈ { + 1 , − 1 }

Pr ( y i = + 1 ∣ X i ) = 1 1 + e − X i ⊤ β , Pr ( y i = − 1 ∣ X i ) = 1 1 + e X i ⊤ β \operatorname{Pr}(y_i = +1|X_i) = \frac{1}{1+e^{-X_i^{\top} \beta}}, \quad

\operatorname{Pr}(y_i = -1|X_i) = \frac{1}{1+e^{X_i^{\top} \beta}}

Pr ( y i = + 1∣ X i ) = 1 + e − X i ⊤ β 1 , Pr ( y i = − 1∣ X i ) = 1 + e X i ⊤ β 1

合并起来,得到

p ( y i ) = 1 1 + e − y i X i ⊤ β p\left(y_i\right)=\frac{1}{1+e^{-y_iX_i^{\top} \beta}}

p ( y i ) = 1 + e − y i X i ⊤ β 1

1.4 Perceptron

一个基础的感知机,可以表示为:

y i = sign ( X i ⊤ β ) y_i=\operatorname{sign}\left(X_i^{\top} \beta\right)

y i = sign ( X i ⊤ β )

其中 sign ( ⋅ ) \operatorname{sign}(\cdot) sign ( ⋅ )

1.5 Three models

Generative models

给定观测变量 X X X Y Y Y 生成式模型建模联合概率 p θ ( X , Y ) p_\theta(X, Y) p θ ( X , Y ) 。用生成式模型做分类时,使用条件概率公式:

p θ ( Y ∣ X ) = p θ ( X , Y ) p θ ( X ) = p θ ( X , Y ) ∑ Y ′ p θ ( X , Y ′ ) p_\theta(Y | X)=\frac{p_\theta(X, Y)}{p_\theta(X)}=\frac{p_\theta(X, Y)}{\sum_{Y^{\prime}} p_\theta\left(X, Y^{\prime}\right)}

p θ ( Y ∣ X ) = p θ ( X ) p θ ( X , Y ) = ∑ Y ′ p θ ( X , Y ′ ) p θ ( X , Y )

假设 g θ g_\theta g θ

h ∼ N ( 0 , I ) h \sim \mathrm{N}(0, I)

h ∼ N ( 0 , I )

期望的输出为:

X = g θ ( h ) + ε X = g_\theta(h) + \varepsilon

X = g θ ( h ) + ε

输出 X X X

p θ ( X ) = ∫ p ( h ) p θ ( X ∣ h ) d h p_\theta(X)=\int p(h) p_\theta(X | h) \mathrm{d} h

p θ ( X ) = ∫ p ( h ) p θ ( X ∣ h ) d h

要增大 log p θ ( X ) \log p_\theta(X) log p θ ( X ) log p ( h ) \log p(h) log p ( h ) log p θ ( X ∣ h ) \log p_\theta(X | h) log p θ ( X ∣ h )

log p ( h ) \log p(h) log p ( h ) h ∼ N ( 0 , I ) h \sim N(0, I) h ∼ N ( 0 , I )

p ( h ) = 1 2 π exp ( − 1 2 ∥ h ∥ 2 ) p(h) = \frac{1}{\sqrt{2\pi}}\exp\left(-\frac{1}{2}\| h \|^2\right)

p ( h ) = 2 π 1 exp ( − 2 1 ∥ h ∥ 2 )

从而

log p ( h ) = − 1 2 ∥ h ∥ 2 + c o n s t a n t \log p(h)=-\frac{1}{2}\|h\|^2+\mathrm{constant}

log p ( h ) = − 2 1 ∥ h ∥ 2 + constant

log p θ ( X ∣ h ) \log p_\theta(X | h) log p θ ( X ∣ h )

X − g θ ( h ) = ε ∼ N ( 0 , σ 2 I ) X-g_\theta(h)=\varepsilon \sim \mathrm{N}\left(0, \sigma^2 \mathbf{I}\right)

X − g θ ( h ) = ε ∼ N ( 0 , σ 2 I )

那么

X ∣ h ∼ N ( g θ ( h ) , σ 2 I ) X | h \sim \mathrm{N}\left(g_\theta(h), \sigma^2 \mathbf{I}\right)

X ∣ h ∼ N ( g θ ( h ) , σ 2 I )

从而

log p θ ( X ∣ h ) = − ∥ X − g θ ( h ) ∥ 2 2 σ 2 + c o n s t a n t \log p_\theta(X | h)=-\frac{\left\|X-g_\theta(h)\right\|^2}{2 \sigma^2}+\mathrm{constant}

log p θ ( X ∣ h ) = − 2 σ 2 ∥ X − g θ ( h ) ∥ 2 + constant

Discriminative models

给定观测变量 X X X Y Y Y 判别式模型建模条件概率 P ( Y ∣ X ) P(Y|X) P ( Y ∣ X ) . 设输入为 X X X K K K f θ ( X ) f_\theta(X) f θ ( X )

p θ ( y = k ∣ X ) = p k = 1 Z ( θ ) exp ( f θ ( k ) ( X ) ) p_\theta(y=k | X)= p_k = \frac{1}{Z(\theta)} \exp (f_\theta^{(k)}(X))

p θ ( y = k ∣ X ) = p k = Z ( θ ) 1 exp ( f θ ( k ) ( X ))

其中

Z ( θ ) = ∑ k exp ( f θ ( k ) ( X ) ) Z(\theta) = \sum_k \exp (f_\theta^{(k)}(X))

Z ( θ ) = k ∑ exp ( f θ ( k ) ( X ))

Descriptive models

描述式模型建模输入数据分布 p θ ( X ) p_\theta(X) p θ ( X ) . 可以表示为:

p θ ( X ) = 1 Z ( θ ) exp ( f θ ( X ) ) p_\theta(X)=\frac{1}{Z(\theta)} \exp(f_\theta(X))

p θ ( X ) = Z ( θ ) 1 exp ( f θ ( X ))

其中

Z ( θ ) = ∫ exp ( f θ ( x ) ) d x Z(\theta) = \int \exp (f_\theta(x)) \mathrm{d} x

Z ( θ ) = ∫ exp ( f θ ( x )) d x

1.7 Loss functions

为了从数据集 { ( X i , y i ) } i = 1 n \left\{\left(X_i, y_i\right)\right\}_{i=1}^n { ( X i , y i ) } i = 1 n β \beta β

L ( β ) = ∑ i = 1 n L ( y i , X i ⊤ β ) \mathscr{L}(\beta)=\sum_{i=1}^n L\left(y_i, X_i^{\top} \beta\right)

L ( β ) = i = 1 ∑ n L ( y i , X i ⊤ β )

其中 L ( y i , X i ⊤ β ) L\left(y_i, X_i^{\top} \beta\right) L ( y i , X i ⊤ β )

Loss function for least squares regression

在线性回归中,我们常使用最小平方损失:

L ( y i , X i ⊤ β ) = ( y i − X i ⊤ β ) 2 L(y_i, X_i^{\top} \beta)=\left(y_i-X_i^{\top} \beta\right)^2

L ( y i , X i ⊤ β ) = ( y i − X i ⊤ β ) 2

这一损失函数同样可以从最大似然估计中得到. 先假设误差服从正态 分布,即

y i − X i ⊤ β = ε i ∼ N ( 0 , σ 2 I ) y_i-X_i^{\top} \beta=\varepsilon_i \sim \mathrm{N}(0,\sigma^2I)

y i − X i ⊤ β = ε i ∼ N ( 0 , σ 2 I )

那么有

y i ∣ X i ∼ N ( X i ⊤ β , σ 2 I ) y_i|X_i \sim \mathrm{N}(X_i^\top \beta, \sigma^2I)

y i ∣ X i ∼ N ( X i ⊤ β , σ 2 I )

即

P r ( y i ∣ X i , β ) = 1 2 π σ exp [ − ( y i − X i ⊤ β ) 2 2 σ 2 ] \mathrm{Pr}(y_i|X_i, \beta) = \frac{1}{\sqrt{2\pi} \sigma} \exp\left[-\frac{(y_i - X_i^\top \beta)^2}{2\sigma^2}\right]

Pr ( y i ∣ X i , β ) = 2 π σ 1 exp [ − 2 σ 2 ( y i − X i ⊤ β ) 2 ]

取负对数似然为损失函数,有:

L ( y i , X i ⊤ β ) = − log Pr ( y i ∣ X i , β ) = ( y i − X i ⊤ β ) 2 2 σ 2 + c o n s t a n t L(y_i, X_i^{\top} \beta)= -\log \operatorname{Pr}(y_i |X_i, \beta) = \frac{(y_i-X_i^{\top} \beta)^2}{2\sigma^2}+\mathrm{constant}

L ( y i , X i ⊤ β ) = − log Pr ( y i ∣ X i , β ) = 2 σ 2 ( y i − X i ⊤ β ) 2 + constant

从而

L ( β ) = ∑ i = 1 n L ( y i , X i ⊤ β ) = 2 σ 2 ∑ i = 1 n [ ( y i − X i ⊤ β ) 2 2 σ 2 + c o n s t a n t ] = ∑ i = 1 n ( y i − X i ⊤ β ) 2 \mathscr{L}(\beta)=\sum_{i=1}^n L(y_i, X_i^{\top} \beta)=2\sigma^2 \sum_{i=1}^n\left[\frac{(y_i-X_i^{\top} \beta)^2}{2\sigma^2}+\mathrm{constant}\right]=\sum_{i=1}^n(y_i-X_i^{\top} \beta)^2

L ( β ) = i = 1 ∑ n L ( y i , X i ⊤ β ) = 2 σ 2 i = 1 ∑ n [ 2 σ 2 ( y i − X i ⊤ β ) 2 + constant ] = i = 1 ∑ n ( y i − X i ⊤ β ) 2

因此,最小二乘法的本质是最大似然估计.

Loss function for robust linear regression

考虑到最小平方损失对 outliers 较为敏感,可以将损失函数中的平方换为绝对值,即:

L ( y i , X i ⊤ β ) = ∣ y i − X i ⊤ β ∣ L(y_i, X_i^{\top} \beta)=| y_i-X_i^{\top} \beta |

L ( y i , X i ⊤ β ) = ∣ y i − X i ⊤ β ∣

将两种损失结合,得到 Huber loss:

L ( y i , X i ⊤ β ) = { 1 2 ( y i − X i ⊤ β ) 2 , if ∣ y i − X i ⊤ β ∣ ≤ δ δ ∣ y i − X i ⊤ β ∣ − δ 2 2 , otherwise L(y_i, X_i^{\top} \beta)= \begin{cases}\frac{1}{2}(y_i-X_i^{\top} \beta)^2, & \text { if }|y_i-X_i^{\top} \beta| \leq \delta \\ \delta|y_i-X_i^{\top} \beta|-\frac{\delta^2}{2}, & \text { otherwise }\end{cases}

L ( y i , X i ⊤ β ) = { 2 1 ( y i − X i ⊤ β ) 2 , δ ∣ y i − X i ⊤ β ∣ − 2 δ 2 , if ∣ y i − X i ⊤ β ∣ ≤ δ otherwise

其中 δ \delta δ δ \delta δ

Loss function for logistic regression with 0/1 responses

前面推导过,当标签 y i ∈ { 0 , 1 } y_i \in \{0, 1\} y i ∈ { 0 , 1 }

Pr ( y i ∣ X i , β ) = exp ( y i X i ⊤ β ) 1 + exp ( X i ⊤ β ) \operatorname{Pr}\left(y_i | X_i, \beta\right) =\frac{\exp (y_i X_i^\top \beta)}{1+\exp (X_i^{\top} \beta)}

Pr ( y i ∣ X i , β ) = 1 + exp ( X i ⊤ β ) exp ( y i X i ⊤ β )

为了最大化这一概率,取负对数似然为损失函数:

L ( y i , X i ⊤ β ) = − log Pr ( y i ∣ X i , β ) = − [ y i X i ⊤ β − log ( 1 + exp ( X i ⊤ β ) ) ] L(y_i, X_i^{\top} \beta)= -\log \operatorname{Pr}(y_i |X_i, \beta) = -\left[y_i X_i^{\top} \beta-\log (1+\exp (X_i^{\top} \beta))\right]

L ( y i , X i ⊤ β ) = − log Pr ( y i ∣ X i , β ) = − [ y i X i ⊤ β − log ( 1 + exp ( X i ⊤ β )) ]

Loss function for logistic regression with ± \pm ±

前面推导过,当标签 y i ∈ { + 1 , − 1 } y_i \in \{+1, -1\} y i ∈ { + 1 , − 1 }

Pr ( y i ∣ X i , β ) = 1 1 + exp ( − y i X i ⊤ β ) \operatorname{Pr}\left(y_i | X_i, \beta\right) =\frac{1}{1+\exp (-y_i X_i^{\top} \beta)}

Pr ( y i ∣ X i , β ) = 1 + exp ( − y i X i ⊤ β ) 1

同样取负对数似然为损失函数,有:

L ( y i , X i ⊤ β ) = − log Pr ( y i ∣ X i , β ) = log [ 1 + exp ( − y i X i ⊤ β ) ] L(y_i, X_i^{\top} \beta)= -\log \operatorname{Pr}(y_i |X_i, \beta) = \log \left[1+\exp \left(-y_i X_i^{\top} \beta\right)\right]

L ( y i , X i ⊤ β ) = − log Pr ( y i ∣ X i , β ) = log [ 1 + exp ( − y i X i ⊤ β ) ]

该损失被称为 logistic loss.

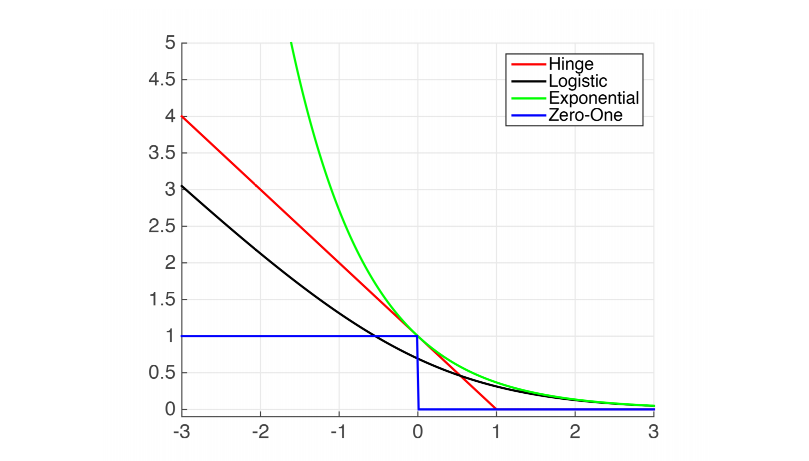

Loss functions for classification

对于 y i ∈ { + 1 , − 1 } y_i \in \{+1, -1\} y i ∈ { + 1 , − 1 }

Logistic loss = log ( 1 + exp ( − y i X i ⊤ β ) ) , Exponential loss = exp ( − y i X i ⊤ β ) , Hinge loss = max ( 0 , 1 − y i X i ⊤ β ) , Zero-one loss = 1 ( y i X i ⊤ β < 0 ) \begin{aligned}

& \text { Logistic loss }=\log \left(1+\exp \left(-y_i X_i^{\top} \beta\right)\right), \\

& \text { Exponential loss }=\exp \left(-y_i X_i^{\top} \beta\right), \\

& \text { Hinge loss }=\max \left(0,1-y_i X_i^{\top} \beta\right), \\

& \text { Zero-one loss }=1\left(y_i X_i^{\top} \beta<0\right)

\end{aligned}

Logistic loss = log ( 1 + exp ( − y i X i ⊤ β ) ) , Exponential loss = exp ( − y i X i ⊤ β ) , Hinge loss = max ( 0 , 1 − y i X i ⊤ β ) , Zero-one loss = 1 ( y i X i ⊤ β < 0 )

图中横轴表示 m i = y i X i ⊤ β m_i = y_iX_i^\top\beta m i = y i X i ⊤ β L ( y i , X i ⊤ β ) L(y_i, X_i^\top\beta) L ( y i , X i ⊤ β ) y i y_i y i X i ⊤ β X_i^\top\beta X i ⊤ β y i = + 1 y_i = +1 y i = + 1 X i ⊤ β X_i^\top\beta X i ⊤ β y i = − 1 y_i = -1 y i = − 1 X i ⊤ β X_i^\top\beta X i ⊤ β m i = y i X i ⊤ β m_i = y_iX_i^\top\beta m i = y i X i ⊤ β m i m_i m i

1.8 Least Squares

考虑线性模型 Y = X β + ε Y = X\beta + \varepsilon Y = Xβ + ε

β ^ = arg min β L ( β ) = arg min β ∥ Y − X β ∥ 2 \hat{\beta}=\argmin_\beta L(\beta) = \argmin_\beta\Vert Y-X \beta\Vert^2

β ^ = β arg min L ( β ) = β arg min ∥ Y − Xβ ∥ 2

考虑到 L ( β ) L(\beta) L ( β ) β = β ^ \beta = \hat{\beta} β = β ^

∂ ∂ β L ( β ) = 0 \frac{\partial}{\partial \beta} L(\beta) = 0

∂ β ∂ L ( β ) = 0

下面对 L ( β ) L(\beta) L ( β )

L ( β ) = ( Y − X β ) ⊤ ( Y − X β ) = Y ⊤ Y − Y ⊤ X β − β ⊤ X ⊤ Y + β ⊤ X ⊤ X β \begin{aligned}

L(\beta) & = (Y - X\beta)^\top(Y - X\beta) \\

& = Y^{\top} Y-Y^{\top} X \beta-\beta^{\top} X^{\top} Y+\beta^{\top} X^{\top} X \beta \\

\end{aligned}

L ( β ) = ( Y − Xβ ) ⊤ ( Y − Xβ ) = Y ⊤ Y − Y ⊤ Xβ − β ⊤ X ⊤ Y + β ⊤ X ⊤ Xβ

考虑到 β ⊤ X ⊤ Y \beta^{\top} X^{\top} Y β ⊤ X ⊤ Y β ⊤ X ⊤ Y = Y ⊤ X β \beta^{\top} X^{\top} Y=Y^{\top} X \beta β ⊤ X ⊤ Y = Y ⊤ Xβ

L ( β ) = Y ⊤ Y − 2 Y ⊤ X β + β ⊤ X ⊤ X β L(\beta) = Y^{\top} Y-2 Y^{\top} X \beta+\beta^{\top} X^{\top} X \beta

L ( β ) = Y ⊤ Y − 2 Y ⊤ Xβ + β ⊤ X ⊤ Xβ

假设 A A A

∂ ∂ β ( β ⊤ A β ) = 2 A β \frac{\partial}{\partial \beta}\left(\beta^{\top} A \beta\right)=2 A \beta ∂ β ∂ ( β ⊤ A β ) = 2 A β

∂ ∂ β ( b ⊤ β ) = b \frac{\partial}{\partial \beta}\left(b^{\top} \beta\right)=b ∂ β ∂ ( b ⊤ β ) = b

根据矩阵求导法则,有:

∂ L ∂ β = − 2 X ⊤ Y + 2 X ⊤ X β \frac{\partial L}{\partial \beta}=-2 X^{\top} Y+2 X^{\top} X \beta

∂ β ∂ L = − 2 X ⊤ Y + 2 X ⊤ Xβ

令 ∂ L ∂ β = 0 \dfrac{\partial L}{\partial \beta} = 0 ∂ β ∂ L = 0

2 X ⊤ ( Y − X β ) = 0 \begin{equation}

2 X^\top(Y-X \beta)=0

\end{equation}

2 X ⊤ ( Y − Xβ ) = 0

如果 X X X X ⊤ X X^\top X X ⊤ X

β ^ = ( X ⊤ X ) − 1 X ⊤ Y \hat{\beta}=\left(X^\top X\right)^{-1} X^\top Y

β ^ = ( X ⊤ X ) − 1 X ⊤ Y

因此,

Y ^ = X β ^ = X ( X ⊤ X ) − 1 X ⊤ Y \hat{Y}=X \hat{\beta}=X\left(X^\top X\right)^{-1} X^\top Y

Y ^ = X β ^ = X ( X ⊤ X ) − 1 X ⊤ Y

可以看出,最小二乘法的实质是将 Y Y Y X X X X β ^ X\hat{\beta} X β ^ ε = Y − X β \varepsilon = Y - X\beta ε = Y − Xβ

Distribution of β ^ \hat{\beta} β ^

下面讨论训练得到的参数 β ^ \hat{\beta} β ^ β true \beta_\text{true} β true

β ^ = ( X ⊤ X ) − 1 X ⊤ Y = ( X ⊤ X ) − 1 X ⊤ ( X β true + ε ) = β true + ( X ⊤ X ) − 1 X ⊤ ε \begin{aligned}

\hat{\beta} & =(X^\top X)^{-1} X^\top Y \\

& =(X^\top X)^{-1} X^\top(X \beta_{\text {true}}+\varepsilon) \\

& =\beta_{\text {true}}+(X^\top X)^{-1} X^\top \varepsilon

\end{aligned}

β ^ = ( X ⊤ X ) − 1 X ⊤ Y = ( X ⊤ X ) − 1 X ⊤ ( X β true + ε ) = β true + ( X ⊤ X ) − 1 X ⊤ ε

可以看出,β ^ \hat{\beta} β ^

E [ β ^ ] = β true \mathbb{E}[\hat{\beta}]=\beta_{\text {true}}

E [ β ^ ] = β true

协方差矩阵为:

V a r ( β ^ ) = E [ ( β ^ − E [ β ^ ] ) ( β ^ − E [ β ^ ] ) ⊤ ] = E [ ( ( X ⊤ X ) − 1 X ⊤ ε ) ( ( X ⊤ X ) − 1 X ⊤ ε ) ⊤ ] = ( X ⊤ X ) − 1 X ⊤ E [ ε ε ⊤ ] X ( X ⊤ X ) − 1 , ⊤ = ( X ⊤ X ) − 1 X ⊤ ⋅ σ 2 I ⋅ X ( X ⊤ X ) − 1 = σ 2 ( X ⊤ X ) − 1 \begin{aligned}

\mathrm{Var}(\hat{\beta}) & = \mathbb{E}\left[(\hat{\beta} - \mathbb{E}[\hat{\beta}])(\hat{\beta} - \mathbb{E}[\hat{\beta}])^\top\right] \\

& = \mathbb{E}\left[((X^\top X)^{-1} X^\top \varepsilon )((X^\top X)^{-1} X^\top \varepsilon)^\top\right] \\

& = (X^\top X)^{-1} X^\top \mathbb{E}[\varepsilon \varepsilon^\top] X (X^\top X)^{-1, \top} \\

& = (X^\top X)^{-1} X^\top \cdot \sigma^2 I \cdot X (X^\top X)^{-1} \\

& = \sigma^2 (X^\top X)^{-1}

\end{aligned}

Var ( β ^ ) = E [ ( β ^ − E [ β ^ ]) ( β ^ − E [ β ^ ] ) ⊤ ] = E [ (( X ⊤ X ) − 1 X ⊤ ε ) (( X ⊤ X ) − 1 X ⊤ ε ) ⊤ ] = ( X ⊤ X ) − 1 X ⊤ E [ ε ε ⊤ ] X ( X ⊤ X ) − 1 , ⊤ = ( X ⊤ X ) − 1 X ⊤ ⋅ σ 2 I ⋅ X ( X ⊤ X ) − 1 = σ 2 ( X ⊤ X ) − 1

因此,

β ^ ∼ N ( β true , σ 2 ( X ⊤ X ) − 1 ) \hat{\beta} \sim N\left(\beta_\text{true}, \sigma^2 (X^\top X)^{-1}\right)

β ^ ∼ N ( β true , σ 2 ( X ⊤ X ) − 1 )

1.9 Kullback-Leibler divergence and cross entropy

Coding and entropy

一个分布 p ( x ) p(x) p ( x )

H ( p ) = E p [ − log p ( X ) ] = ∑ x p ( x ) [ − log p ( x ) ] H(p)=\mathbb{E}_p[-\log p(X)]=\sum_x p(x)[-\log p(x)]

H ( p ) = E p [ − log p ( X )] = x ∑ p ( x ) [ − log p ( x )]

熵等于用 − log p ( x ) -\log p(x) − log p ( x )

Kullback-Leibler divergence and cross entropy

交叉熵,是对于分布 p ( x ) p(x) p ( x ) q ( x ) q(x) q ( x ) − log q ( x ) -\log q(x) − log q ( x )

C E ( p ∣ q ) = E p [ − log q ( X ) ] = − ∑ x p ( x ) log q ( x ) \mathrm{CE}(p | q) = \mathbb{E}_p[-\log q(X)]=-\sum_x p(x) \log q(x)

CE ( p ∣ q ) = E p [ − log q ( X )] = − x ∑ p ( x ) log q ( x )

不难得到,交叉熵不小于熵,即:

C E ( p ∣ q ) ≥ H ( p ) \mathrm{CE}(p | q) \ge H(p)

CE ( p ∣ q ) ≥ H ( p )

当且仅当 p ( x ) = q ( x ) p(x) = q(x) p ( x ) = q ( x )

定义 KL 散度为交叉熵和熵的差,即:

K L ( p ∣ q ) = C E ( p ∣ q ) − H ( p ) = E p [ log p ( X ) q ( X ) ] = ∑ x p ( x ) log p ( x ) q ( x ) ≥ 0 \mathrm{KL}(p | q) = \mathrm{CE}(p | q) - H(p) = \mathbb{E}_p\left[\log \frac{p(X)}{q(X)}\right] = \sum_x p(x) \log \frac{p(x)}{q(x)} \ge 0

KL ( p ∣ q ) = CE ( p ∣ q ) − H ( p ) = E p [ log q ( X ) p ( X ) ] = x ∑ p ( x ) log q ( x ) p ( x ) ≥ 0

当且仅当 p ( x ) = q ( x ) p(x) = q(x) p ( x ) = q ( x ) p ( x ) p(x) p ( x ) q ( x ) q(x) q ( x )

KL ( p ∣ q ) ≠ KL ( q ∣ p ) \operatorname{KL}(p | q) \neq \operatorname{KL}(q | p)

KL ( p ∣ q ) = KL ( q ∣ p )

对于条件概率分布,KL 散度的定义是:

KL ( p ( y ∣ x ) ∣ q ( y ∣ x ) ) = def E p ( x , y ) [ log p ( Y ∣ X ) q ( Y ∣ X ) ] = E p ( x ) E p ( y ∣ x ) [ log p ( Y ∣ X ) q ( Y ∣ X ) ] \operatorname{KL}(p(y | x) | q(y | x)) \stackrel{\text { def }}{=} \mathbb{E}_{p(x, y)}\left[\log \frac{p(Y | X)}{q(Y | X)}\right] = \mathbb{E}_{p(x)} \mathbb{E}_{p(y | x)}\left[\log \frac{p(Y | X)}{q(Y | X)}\right]

KL ( p ( y ∣ x ) ∣ q ( y ∣ x )) = def E p ( x , y ) [ log q ( Y ∣ X ) p ( Y ∣ X ) ] = E p ( x ) E p ( y ∣ x ) [ log q ( Y ∣ X ) p ( Y ∣ X ) ]

对于多变量分布,KL 散度为:

KL ( p ( x , y ) ∣ q ( x , y ) ) = KL ( p ( x ) ∣ q ( x ) ) + KL ( p ( y ∣ x ) ∣ q ( y ∣ x ) ) \begin{equation}

\operatorname{KL}(p(x, y) | q(x, y))=\operatorname{KL}(p(x) | q(x))+\operatorname{KL}(p(y | x) | q(y | x))

\end{equation}

KL ( p ( x , y ) ∣ q ( x , y )) = KL ( p ( x ) ∣ q ( x )) + KL ( p ( y ∣ x ) ∣ q ( y ∣ x ))

1.10 Maximum likelihood

下面证明最大似然估计的正确性. 优化目标是:

L ( θ ) = 1 n ∑ i = 1 n log p θ ( y i ∣ X i ) \mathscr{L}(\theta)=\frac{1}{n} \sum_{i=1}^n \log p_\theta(y_i | X_i)

L ( θ ) = n 1 i = 1 ∑ n log p θ ( y i ∣ X i )

设 P data ( X , y ) P_\text{data}(X, y) P data ( X , y )

max θ L ( θ ) = max θ 1 n ∑ i = 1 n log p θ ( y i ∣ X i ) = max θ E P data [ log p θ ( y ∣ X ) ] = min θ { − E P data [ log p θ ( y ∣ X ) ] } \begin{aligned}

\max_\theta \mathscr{L}(\theta) & = \max _\theta \frac{1}{n} \sum_{i=1}^n \log p_\theta(y_i | X_i) \\

& = \max _\theta \mathbb{E}_{P_{\text {data}}}\left[\log p_\theta(y | X)\right] \\

& = \min _\theta\left\{-\mathbb{E}_{P_{\text {data}}}\left[\log p_\theta(y | X)\right]\right\} \\

\end{aligned}

θ max L ( θ ) = θ max n 1 i = 1 ∑ n log p θ ( y i ∣ X i ) = θ max E P data [ log p θ ( y ∣ X ) ] = θ min { − E P data [ log p θ ( y ∣ X ) ] }

由于 E P data [ log P data ( y ∣ X ) ] \mathbb{E}_{P_{\text {data}}}\left[\log P_{\text {data}}(y | X)\right] E P data [ log P data ( y ∣ X ) ] θ \theta θ

max θ L ( θ ) = min θ E P data [ log P data ( y ∣ X ) ] − E P data [ log p θ ( y ∣ X ) ] = min θ K L ( P data ( y ∣ X ) ∣ p θ ( y ∣ X ) ) \begin{aligned}

\max_\theta \mathscr{L}(\theta) & = \min _\theta \mathbb{E}_{P_{\text {data }}}\left[\log P_{\text {data}}(y | X)\right]-\mathbb{E}_{P_{\text {data}}}\left[\log p_\theta(y | X)\right] \\

& = \min _\theta \mathrm{KL}\left(P_{\text {data}}(y | X) | p_\theta(y | X)\right)

\end{aligned}

θ max L ( θ ) = θ min E P data [ log P data ( y ∣ X ) ] − E P data [ log p θ ( y ∣ X ) ] = θ min KL ( P data ( y ∣ X ) ∣ p θ ( y ∣ X ) )

可以看出,最大似然估计的实质是:最小化预测分布和真实数据分布的 KL 散度,即让预测分布尽可能接近真实数据分布 .

1.11 Kullback-Leibler of conditionals

下面证明多变量分布的 KL 散度公式,即式 (2).

K L ( p ( x , y ) ∣ q ( x , y ) ) = E p [ log p ( x , y ) q ( x , y ) ] = E p [ log p ( x ) p ( y ∣ x ) q ( x ) q ( y ∣ x ) ] = E p [ log p ( x ) q ( x ) ] + E p [ log p ( y ∣ x ) q ( y ∣ x ) ] = K L ( p ( x ) ∣ q ( x ) ) + K L ( p ( y ∣ x ) ∣ q ( y ∣ x ) ) \begin{aligned}

\mathrm{KL}(p(x, y) | q(x, y)) & =\mathbb{E}_p\left[\log \frac{p(x, y)}{q(x, y)}\right] \\

& =\mathbb{E}_p\left[\log \frac{p(x) p(y | x)}{q(x) q(y | x)}\right] \\

& =\mathbb{E}_p\left[\log \frac{p(x)}{q(x)}\right]+\mathbb{E}_p\left[\log \frac{p(y | x)}{q(y | x)}\right] \\

& =\mathrm{KL}(p(x) | q(x))+\mathrm{KL}(p(y | x) | q(y | x))

\end{aligned}

KL ( p ( x , y ) ∣ q ( x , y )) = E p [ log q ( x , y ) p ( x , y ) ] = E p [ log q ( x ) q ( y ∣ x ) p ( x ) p ( y ∣ x ) ] = E p [ log q ( x ) p ( x ) ] + E p [ log q ( y ∣ x ) p ( y ∣ x ) ] = KL ( p ( x ) ∣ q ( x )) + KL ( p ( y ∣ x ) ∣ q ( y ∣ x ))

1.13 Gradient of log-likelihood

Discriminative model

前面提到过,判别式模型的输出为:

p θ ( y = k ∣ X ) = p k = 1 Z ( θ ) exp ( f θ ( k ) ( X ) ) p_\theta(y=k | X)=p_k=\frac{1}{Z(\theta)} \exp (f_\theta^{(k)}(X))

p θ ( y = k ∣ X ) = p k = Z ( θ ) 1 exp ( f θ ( k ) ( X ))

其中

Z ( θ ) = ∑ k exp ( f θ ( k ) ( X ) ) Z(\theta)=\sum_k \exp (f_\theta^{(k)}(X))

Z ( θ ) = k ∑ exp ( f θ ( k ) ( X ))

对对数概率求梯度,有:

∂ ∂ θ log p θ ( y ∣ X ) = ∂ ∂ θ f θ ( k ) ( X ) − ∂ ∂ θ log Z ( θ ) = ∂ ∂ θ f θ ( k ) ( X ) − 1 Z ( θ ) ∂ ∂ θ Z ( θ ) = ∂ ∂ θ f θ ( k ) ( X ) − 1 Z ( θ ) ∂ ∂ θ [ ∑ k ′ exp ( f θ ( k ′ ) ( X ) ) ] = ∂ ∂ θ f θ ( k ) ( X ) − [ ∑ k ′ exp ( f θ ( k ′ ) ( X ) ) Z ( θ ) ∂ ∂ θ f θ ( k ′ ) ( X ) ] = ∂ ∂ θ f θ ( k ) ( X ) − [ ∑ k ′ p k ′ ∂ ∂ θ f θ ( k ′ ) ( X ) ] = ∑ k ′ ( 1 ( y = k ′ ) − p k ′ ) ∂ ∂ θ f θ ( k ′ ) ( X ) = ∂ ∂ θ f θ ( X ) ⊤ ( Y − p ) = ∂ ∂ θ f θ ( X ) ⊤ ( Y − E θ ( Y ∣ X ) ) \begin{aligned}

\frac{\partial}{\partial \theta} \log p_\theta(y | X) & =\frac{\partial}{\partial \theta} f_\theta^{(k)}(X)-\frac{\partial}{\partial \theta} \log Z(\theta) \\

& =\frac{\partial}{\partial \theta} f_\theta^{(k)}(X)-\frac{1}{Z(\theta)} \frac{\partial}{\partial \theta} Z(\theta) \\

& =\frac{\partial}{\partial \theta} f_\theta^{(k)}(X)-\frac{1}{Z(\theta)} \frac{\partial}{\partial \theta}\left[\sum_{k^{\prime}} \exp (f_\theta^{(k^{\prime})}(X))\right] \\

& =\frac{\partial}{\partial \theta} f_\theta^{(k)}(X)-\left[\sum_{k^{\prime}} \frac{\exp (f_\theta^{(k^{\prime})}(X))}{Z(\theta)} \frac{\partial}{\partial \theta} f_\theta^{(k^{\prime})}(X)\right] \\

& =\frac{\partial}{\partial \theta} f_\theta^{(k)}(X)-\left[\sum_{k^{\prime}} p_{k^{\prime}} \frac{\partial}{\partial \theta} f_\theta^{(k^{\prime})}(X)\right] \\

& =\sum_{k^{\prime}}\left(1(y=k^{\prime})-p_{k^{\prime}}\right) \frac{\partial}{\partial \theta} f_\theta^{(k^{\prime})}(X) \\

& =\frac{\partial}{\partial \theta} f_\theta(X)^{\top}(Y-p) \\

& =\frac{\partial}{\partial \theta} f_\theta(X)^{\top}\left(Y-\mathbb{E}_\theta(Y | X)\right)

\end{aligned}

∂ θ ∂ log p θ ( y ∣ X ) = ∂ θ ∂ f θ ( k ) ( X ) − ∂ θ ∂ log Z ( θ ) = ∂ θ ∂ f θ ( k ) ( X ) − Z ( θ ) 1 ∂ θ ∂ Z ( θ ) = ∂ θ ∂ f θ ( k ) ( X ) − Z ( θ ) 1 ∂ θ ∂ [ k ′ ∑ exp ( f θ ( k ′ ) ( X )) ] = ∂ θ ∂ f θ ( k ) ( X ) − [ k ′ ∑ Z ( θ ) exp ( f θ ( k ′ ) ( X )) ∂ θ ∂ f θ ( k ′ ) ( X ) ] = ∂ θ ∂ f θ ( k ) ( X ) − [ k ′ ∑ p k ′ ∂ θ ∂ f θ ( k ′ ) ( X ) ] = k ′ ∑ ( 1 ( y = k ′ ) − p k ′ ) ∂ θ ∂ f θ ( k ′ ) ( X ) = ∂ θ ∂ f θ ( X ) ⊤ ( Y − p ) = ∂ θ ∂ f θ ( X ) ⊤ ( Y − E θ ( Y ∣ X ) )

其中 Y Y Y y y y

Y = [ Y 1 , Y 2 , … , Y n ] , Y k ′ = { 1 , k ′ = k 0 , k ′ ≠ k Y=\left[Y_1, Y_2, \ldots, Y_n\right], \quad Y_{k^{\prime}}=\left\{\begin{array}{cc}

1, & k^{\prime}=k \\

0, & k^{\prime} \neq k

\end{array}\right.

Y = [ Y 1 , Y 2 , … , Y n ] , Y k ′ = { 1 , 0 , k ′ = k k ′ = k

由此可见,判别式模型从误差中学习(learn from errors) .

Descriptive model

前面提到,描述式模型建模数据分布,即:

p θ ( X ) = 1 Z ( θ ) exp ( f θ ( X ) ) p_\theta(X)=\frac{1}{Z(\theta)} \exp (f_\theta(X))

p θ ( X ) = Z ( θ ) 1 exp ( f θ ( X ))

其中

Z ( θ ) = ∫ exp ( f θ ( x ) ) d x Z(\theta)=\int \exp (f_\theta(x)) \mathrm{d} x

Z ( θ ) = ∫ exp ( f θ ( x )) d x

对对数概率求导,有:

∂ ∂ θ log p θ ( X ) = ∂ ∂ θ f θ ( X ) − ∂ ∂ θ log Z ( θ ) = ∂ ∂ θ f θ ( X ) − 1 Z ( θ ) ∂ ∂ θ Z ( θ ) = ∂ ∂ θ f θ ( X ) − 1 Z ( θ ) ∫ exp ( f θ ( X ) ) ∂ ∂ θ f θ ( X ) d x = ∂ ∂ θ f θ ( X ) − ∫ 1 Z ( θ ) exp ( f θ ( X ) ) ∂ ∂ θ f θ ( X ) d x = ∂ ∂ θ f θ ( X ) − ∑ X p θ ( X ) ∂ ∂ θ f θ ( X ) = ∂ ∂ θ f θ ( X ) − E θ [ ∂ ∂ θ f θ ( X ) ] \begin{aligned}

\frac{\partial}{\partial \theta} \log p_\theta(X) & =\frac{\partial}{\partial \theta} f_\theta(X)-\frac{\partial}{\partial \theta} \log Z(\theta) \\

& =\frac{\partial}{\partial \theta} f_\theta(X)-\frac{1}{Z(\theta)} \frac{\partial}{\partial \theta} Z(\theta) \\

& =\frac{\partial}{\partial \theta} f_\theta(X)-\frac{1}{Z(\theta)} \int \exp (f_\theta(X)) \frac{\partial}{\partial \theta} f_\theta(X) \mathrm{d} x \\

& =\frac{\partial}{\partial \theta} f_\theta(X)-\int \frac{1}{Z(\theta)} \exp \left(f_\theta(X)\right) \frac{\partial}{\partial \theta} f_\theta(X) \mathrm{d} x \\

& =\frac{\partial}{\partial \theta} f_\theta(X)-\sum_X p_\theta(X) \frac{\partial}{\partial \theta} f_\theta(X) \\

& =\frac{\partial}{\partial \theta} f_\theta(X)-\mathbb{E}_\theta\left[\frac{\partial}{\partial \theta} f_\theta(X)\right]

\end{aligned}

∂ θ ∂ log p θ ( X ) = ∂ θ ∂ f θ ( X ) − ∂ θ ∂ log Z ( θ ) = ∂ θ ∂ f θ ( X ) − Z ( θ ) 1 ∂ θ ∂ Z ( θ ) = ∂ θ ∂ f θ ( X ) − Z ( θ ) 1 ∫ exp ( f θ ( X )) ∂ θ ∂ f θ ( X ) d x = ∂ θ ∂ f θ ( X ) − ∫ Z ( θ ) 1 exp ( f θ ( X ) ) ∂ θ ∂ f θ ( X ) d x = ∂ θ ∂ f θ ( X ) − X ∑ p θ ( X ) ∂ θ ∂ f θ ( X ) = ∂ θ ∂ f θ ( X ) − E θ [ ∂ θ ∂ f θ ( X ) ]

Generative model

对于生成式模型 p θ ( h , X ) p_\theta(h, X) p θ ( h , X )

∂ ∂ θ log p θ ( X ) = 1 p θ ( X ) ∂ ∂ θ ∫ p θ ( h , X ) d h = 1 p θ ( X ) ∫ [ ∂ ∂ θ p θ ( h , X ) ] d h = 1 p θ ( X ) ∫ [ ∂ ∂ θ log p θ ( h , X ) ] p θ ( h , X ) d h = ∫ [ ∂ ∂ θ log p θ ( h , X ) ] p θ ( h , X ) p θ ( X ) d h = ∫ [ ∂ ∂ θ log p θ ( h , X ) ] p θ ( h ∣ X ) d h = E p θ ( h ∣ X ) [ ∂ ∂ θ log p θ ( h , X ) ] \begin{aligned}

\frac{\partial}{\partial \theta} \log p_\theta(X) & =\frac{1}{p_\theta(X)} \frac{\partial}{\partial \theta} \int p_\theta(h, X) \mathrm{d} h \\

& =\frac{1}{p_\theta(X)} \int\left[\frac{\partial}{\partial \theta} p_\theta(h, X)\right] \mathrm{d} h \\

& =\frac{1}{p_\theta(X)} \int\left[\frac{\partial}{\partial \theta} \log p_\theta(h, X)\right] p_\theta(h, X) \mathrm{d} h \\

& =\int\left[\frac{\partial}{\partial \theta} \log p_\theta(h, X)\right] \frac{p_\theta(h, X)}{p_\theta(X)} \mathrm{d} h \\

& =\int\left[\frac{\partial}{\partial \theta} \log p_\theta(h, X)\right] p_\theta(h | X) \mathrm{d} h \\

& =\mathbb{E}_{p_\theta(h | X)}\left[\frac{\partial}{\partial \theta} \log p_\theta(h, X)\right]

\end{aligned}

∂ θ ∂ log p θ ( X ) = p θ ( X ) 1 ∂ θ ∂ ∫ p θ ( h , X ) d h = p θ ( X ) 1 ∫ [ ∂ θ ∂ p θ ( h , X ) ] d h = p θ ( X ) 1 ∫ [ ∂ θ ∂ log p θ ( h , X ) ] p θ ( h , X ) d h = ∫ [ ∂ θ ∂ log p θ ( h , X ) ] p θ ( X ) p θ ( h , X ) d h = ∫ [ ∂ θ ∂ log p θ ( h , X ) ] p θ ( h ∣ X ) d h = E p θ ( h ∣ X ) [ ∂ θ ∂ log p θ ( h , X ) ]

其中

p θ ( h , X ) = p ( h ) ⋅ p θ ( X ∣ h ) = N ( h ∣ 0 , I ) ⋅ N ( X ∣ g θ ( h ) , σ 2 I ) p_\theta(h, X) = p(h) \cdot p_\theta(X | h) = \mathrm{N}(h | \mathbf{0}, \mathbf{I}) \cdot \mathrm{N}\left(X | g_\theta(h), \sigma^2 \mathbf{I}\right)

p θ ( h , X ) = p ( h ) ⋅ p θ ( X ∣ h ) = N ( h ∣ 0 , I ) ⋅ N ( X ∣ g θ ( h ) , σ 2 I )

log p θ ( h , X ) = − 1 2 σ 2 ∥ X − g θ ( h ) ∥ 2 − 1 2 ∥ h ∥ 2 + c o n s t a n t \log p_\theta\left(h, X\right)=-\frac{1}{2 \sigma^2}\left\|X-g_\theta\left(h\right)\right\|^2-\frac{1}{2}\left\|h\right\|^2+\mathrm{constant}

log p θ ( h , X ) = − 2 σ 2 1 ∥ X − g θ ( h ) ∥ 2 − 2 1 ∥ h ∥ 2 + constant

Optimizing logistic regression via gradient ascent

在 logistic regression 中,对数似然函数是:

l ( β ) = log L ( β ) = ∑ i = 1 n [ y i X i ⊤ β − log ( 1 + exp X i ⊤ β ) ] l(\beta)=\log L(\beta)=\sum_{i=1}^n\left[y_i X_i^{\top} \beta-\log \left(1+\exp X_i^{\top} \beta\right)\right]

l ( β ) = log L ( β ) = i = 1 ∑ n [ y i X i ⊤ β − log ( 1 + exp X i ⊤ β ) ]

为了最大化 l ( β ) l(\beta) l ( β )

l ′ ( β ) = ∑ i = 1 n [ y i X i − e X i ⊤ β 1 + e X i ⊤ β X i ] = ∑ i = 1 n ( y i − p i ) X i l^{\prime}(\beta)=\sum_{i=1}^n\left[y_i X_i-\frac{e^{X_i^{\top} \beta}}{1+e^{X_i^{\top} \beta}} X_i\right]=\sum_{i=1}^n\left(y_i-p_i\right) X_i

l ′ ( β ) = i = 1 ∑ n [ y i X i − 1 + e X i ⊤ β e X i ⊤ β X i ] = i = 1 ∑ n ( y i − p i ) X i

其中

p i = e X i ⊤ β 1 + e X i ⊤ β = 1 1 + e − X i ⊤ β p_i=\frac{e^{X_i^{\top} \beta}}{1+e^{X_i^{\top} \beta}}=\frac{1}{1+e^{-X_i^{\top} \beta}}

p i = 1 + e X i ⊤ β e X i ⊤ β = 1 + e − X i ⊤ β 1

我们用这个梯度更新 β \beta β l ( β ) l(\beta) l ( β )

β ( t + 1 ) = β ( t ) + γ t ∑ i = 1 n ( y i − p i ) X i \beta^{(t+1)}=\beta^{(t)}+\gamma_t \sum_{i=1}^n\left(y_i-p_i\right) X_i

β ( t + 1 ) = β ( t ) + γ t i = 1 ∑ n ( y i − p i ) X i

可以看出,该算法从错误 y i − p i y_i - p_i y i − p i

1.14 Langevin

在梯度下降算法中,加入随机噪声扰动,有利于跳出局部最优解,这就是 Langevin 动力学.

Brownian motion, Δ t \sqrt{\Delta t} Δ t

设 X t X_t X t t t t β \beta β

X t + Δ t = X t + σ ε t Δ t X_{t+\Delta t}=X_t+\sigma \varepsilon_t \sqrt{\Delta t}

X t + Δ t = X t + σ ε t Δ t

其中 ε t ∼ N ( 0 , 1 ) \varepsilon_t \sim N(0, 1) ε t ∼ N ( 0 , 1 ) X 0 = x X_0 = x X 0 = x [ 0 , t ] [0, t] [ 0 , t ] n n n Δ t = t / n \Delta t = t / n Δ t = t / n

X t = x + ∑ i = 1 n σ ε i t n = x + σ t 1 n ∑ i = 1 n ε i ∼ N ( x , σ 2 t I ) X_t=x+\sum_{i=1}^n \sigma \varepsilon_i \sqrt{\frac{t}{n}}=x+\sigma \sqrt{t} \frac{1}{\sqrt{n}} \sum_{i=1}^n \varepsilon_i \sim \mathrm{~N}\left(x, \sigma^2 t \mathbf{I}\right)

X t = x + i = 1 ∑ n σ ε i n t = x + σ t n 1 i = 1 ∑ n ε i ∼ N ( x , σ 2 t I )

Langevin: energy and entropy

对于描述式模型,我们采样 p θ ( X ) p_\theta(X) p θ ( X )

X ( t + 1 ) = X ( t ) + η ⋅ ∂ ∂ X f θ ( X ) + λ ε X^{(t+1)} = X^{(t)} + \eta \cdot \frac{\partial}{\partial X} f_\theta(X) + \lambda \varepsilon

X ( t + 1 ) = X ( t ) + η ⋅ ∂ X ∂ f θ ( X ) + λ ε

对于生成式模型,我们采样从 p θ ( h i ∣ X i ) p_\theta(h_i | X_i) p θ ( h i ∣ X i ) h i h_i h i

h ( t + 1 ) = h ( t ) + η ⋅ ∂ ∂ h p θ ( h , X i ) + λ ε h^{(t+1)} = h^{(t)} + \eta \cdot \frac{\partial}{\partial h} p_\theta(h, X_i) + \lambda \varepsilon

h ( t + 1 ) = h ( t ) + η ⋅ ∂ h ∂ p θ ( h , X i ) + λ ε

1.17 Linear Discriminant Analysis (LDA)

LDA 的目标是学习一个线性分类器,将 X i X_i X i z = X i ⊤ β z = X_i^\top \beta z = X i ⊤ β

设所有样本为 Ω \Omega Ω Ω + \Omega^+ Ω + Ω − \Omega^- Ω −

∀ X i ∈ Ω + , p ( X i ∣ y = + 1 ) ∼ N ( μ + , Σ + ) \forall X_i \in \Omega^{+}, p\left(X_i | y=+1\right) \sim \mathrm{N}\left(\mu^{+}, \Sigma^{+}\right) ∀ X i ∈ Ω + , p ( X i ∣ y = + 1 ) ∼ N ( μ + , Σ + ) ∀ X i ∈ Ω − , p ( X i ∣ y = − 1 ) ∼ N ( μ − , Σ − ) \forall X_i \in \Omega^{-}, p\left(X_i | y=-1\right) \sim \mathrm{N}\left(\mu^{-}, \Sigma^{-}\right) ∀ X i ∈ Ω − , p ( X i ∣ y = − 1 ) ∼ N ( μ − , Σ − )

那么类间方差为:

σ between 2 = ( E i ∈ Ω + [ X i ⊤ β ] − E i ∈ Ω − [ X i ⊤ β ] ) 2 = ( E i ∈ Ω + [ X i ⊤ ] β − E i ∈ Ω − [ X i ⊤ ] β ) 2 = ( ( μ + ) ⊤ β − ( μ − ) ⊤ β ) 2 = [ ( μ + − μ − ) ⊤ β ] 2 \begin{aligned}

\sigma_{\text {between }}^2 & =\left(\mathbb{E}_{i \in \Omega^{+}}[X_i^{\top} \beta]-\mathbb{E}_{i \in \Omega^{-}}[X_i^{\top} \beta]\right)^2 \\

& =\left(\mathbb{E}_{i \in \Omega^{+}}[X_i^{\top}] \beta-\mathbb{E}_{i \in \Omega^{-}}[X_i^{\top}] \beta\right)^2 \\

& =((\mu^{+})^{\top} \beta-(\mu^{-})^{\top} \beta)^2 \\

& =\left[(\mu^{+}-\mu^{-})^{\top} \beta\right]^2

\end{aligned}

σ between 2 = ( E i ∈ Ω + [ X i ⊤ β ] − E i ∈ Ω − [ X i ⊤ β ] ) 2 = ( E i ∈ Ω + [ X i ⊤ ] β − E i ∈ Ω − [ X i ⊤ ] β ) 2 = (( μ + ) ⊤ β − ( μ − ) ⊤ β ) 2 = [ ( μ + − μ − ) ⊤ β ] 2

类内方差为:

σ within 2 = n pos σ pos 2 + n neg σ neg 2 , n pos = ∣ Ω + ∣ , n neg = ∣ Ω − ∣ σ pos 2 = E i ∈ Ω + [ ( X i ⊤ β − E i ′ ∈ Ω + [ X i ′ ⊤ β ] ) 2 ] = E i ∈ Ω + [ ( β ⊤ X i − E i ′ ∈ Ω + [ β ⊤ X i ′ ] ) ( X i ⊤ β − E i ′ ∈ Ω + [ X i ′ ⊤ β ] ) ] = E i ∈ Ω + [ β ⊤ ( X i − E i ′ ∈ Ω + [ X i ′ ] ) ( X i ⊤ − E i ′ ∈ Ω + [ X i ′ ⊤ ] ) β ] = β ⊤ E i ∈ Ω + [ ( X i − E i ′ ∈ Ω + [ X i ′ ] ) ( X i ⊤ − E i ′ ∈ Ω + [ X i ′ ⊤ ] ) ] β = β ⊤ Σ + β σ neg 2 = β ⊤ Σ − β \begin{aligned}

\sigma_{\text {within }}^2 & =n_{\text {pos }} \sigma_{\text {pos }}^2+n_{\text {neg }} \sigma_{\text {neg }}^2, \quad n_{\text {pos }}=\left|\Omega^{+}\right|, n_{\text {neg }}=\left|\Omega^{-}\right| \\

\sigma_{\text {pos }}^2 & =\mathbb{E}_{i \in \Omega^{+}}\left[\left(X_i^{\top} \beta-\mathbb{E}_{i^{\prime} \in \Omega^{+}}[X_{i^{\prime}}^{\top} \beta]\right)^2\right] \\

& =\mathbb{E}_{i \in \Omega^{+}}\left[\left(\beta^{\top} X_i-\mathbb{E}_{i^{\prime} \in \Omega^{+}}[\beta^{\top} X_{i^{\prime}}]\right)\left(X_i^{\top} \beta-\mathbb{E}_{i^{\prime} \in \Omega^{+}}[X_{i^{\prime}}^{\top} \beta]\right)\right] \\

& =\mathbb{E}_{i \in \Omega^{+}}\left[\beta^{\top}\left(X_i-\mathbb{E}_{i^{\prime} \in \Omega^{+}}[X_{i^{\prime}}]\right)\left(X_i^{\top}-\mathbb{E}_{i^{\prime} \in \Omega^{+}}[X_{i^{\prime}}^{\top}]\right) \beta\right] \\

& =\beta^{\top} \mathbb{E}_{i \in \Omega^{+}}\left[\left(X_i-\mathbb{E}_{i^{\prime} \in \Omega^{+}}[X_{i^{\prime}}]\right)\left(X_i^{\top}-\mathbb{E}_{i^{\prime} \in \Omega^{+}}[X_{i^{\prime}}^{\top}\right]\right)] \beta \\

& =\beta^{\top} \Sigma^{+} \beta \\

\sigma_{\text {neg }}^2 & =\beta^{\top} \Sigma^{-} \beta

\end{aligned}

σ within 2 σ pos 2 σ neg 2 = n pos σ pos 2 + n neg σ neg 2 , n pos = Ω + , n neg = Ω − = E i ∈ Ω + [ ( X i ⊤ β − E i ′ ∈ Ω + [ X i ′ ⊤ β ] ) 2 ] = E i ∈ Ω + [ ( β ⊤ X i − E i ′ ∈ Ω + [ β ⊤ X i ′ ] ) ( X i ⊤ β − E i ′ ∈ Ω + [ X i ′ ⊤ β ] ) ] = E i ∈ Ω + [ β ⊤ ( X i − E i ′ ∈ Ω + [ X i ′ ] ) ( X i ⊤ − E i ′ ∈ Ω + [ X i ′ ⊤ ] ) β ] = β ⊤ E i ∈ Ω + [ ( X i − E i ′ ∈ Ω + [ X i ′ ] ) ( X i ⊤ − E i ′ ∈ Ω + [ X i ′ ⊤ ] ) ] β = β ⊤ Σ + β = β ⊤ Σ − β

从而优化目标是最大化

S = σ between 2 σ within 2 = [ ( μ + − μ − ) ⊤ β ] 2 n p o s β ⊤ Σ + β + n n e g β ⊤ Σ − β = ( β ⊤ ( μ + − μ − ) ) ( ( μ + − μ − ) ⊤ β ) β ⊤ ( n p o s Σ + + n n e g Σ − ) β = β ⊤ S B β β ⊤ S W β \begin{aligned}

S & =\frac{\sigma_{\text {between }}^2}{\sigma_{\text {within }}^2} \\

& =\frac{[(\mu^{+}-\mu^{-})^{\top} \beta]^2}{n_{\mathrm{pos}} \beta^{\top} \Sigma^{+} \beta+n_{\mathrm{neg}} \beta^{\top} \Sigma^{-} \beta} \\

& =\frac{(\beta^{\top}(\mu^{+}-\mu^{-}))((\mu^{+}-\mu^{-})^{\top} \beta)}{\beta^{\top}(n_{\mathrm{pos}} \Sigma^{+}+n_{\mathrm{neg}} \Sigma^{-}) \beta} \\

& =\frac{\beta^{\top} S_B \beta}{\beta^{\top} S_W \beta}

\end{aligned}

S = σ within 2 σ between 2 = n pos β ⊤ Σ + β + n neg β ⊤ Σ − β [( μ + − μ − ) ⊤ β ] 2 = β ⊤ ( n pos Σ + + n neg Σ − ) β ( β ⊤ ( μ + − μ − )) (( μ + − μ − ) ⊤ β ) = β ⊤ S W β β ⊤ S B β

其中

S B = ( μ + − μ − ) ( μ + − μ − ) ⊤ S W = n p o s Σ + + n n e g Σ − \begin{aligned}

S_B & =(\mu^{+}-\mu^{-})(\mu^{+}-\mu^{-})^{\top} \\

S_W & =n_{\mathrm{pos}} \Sigma^{+}+n_{\mathrm{neg}} \Sigma^{-}

\end{aligned}

S B S W = ( μ + − μ − ) ( μ + − μ − ) ⊤ = n pos Σ + + n neg Σ −

由于我们只关心 β \beta β β ⊤ S W β = 1 \beta^\top S_W \beta = 1 β ⊤ S W β = 1

max β β ⊤ S B β , s.t. β ⊤ S W β = 1 \max _\beta \beta^{\top} S_B \beta, \quad \text { s.t. } \quad \beta^{\top} S_W \beta=1

β max β ⊤ S B β , s.t. β ⊤ S W β = 1

使用 Lagrange 乘子法:

L = − β ⊤ S B β + λ ( β ⊤ S W β − 1 ) ⇒ ∂ L ∂ β = − 2 S B β + 2 λ S W β = 0 ⇒ S B β = λ S W β ⇒ S W − 1 S B β = λ β \begin{aligned}

& L=-\beta^{\top} S_B \beta+\lambda(\beta^{\top} S_W \beta-1) \\

\Rightarrow \quad & \frac{\partial L}{\partial \beta}=-2 S_B \beta+2 \lambda S_W \beta=0 \\

\Rightarrow \quad & S_B \beta=\lambda S_W \beta \\

\Rightarrow \quad & S_W^{-1} S_B \beta=\lambda \beta

\end{aligned}

⇒ ⇒ ⇒ L = − β ⊤ S B β + λ ( β ⊤ S W β − 1 ) ∂ β ∂ L = − 2 S B β + 2 λ S W β = 0 S B β = λ S W β S W − 1 S B β = λ β

由此看出,β \beta β S W − 1 S B S_W^{-1} S_B S W − 1 S B S B S_B S B

β ∝ S W − 1 ( μ + − μ − ) \beta \propto S_W^{-1}(\mu^{+}-\mu^{-})

β ∝ S W − 1 ( μ + − μ − )

Lecture 2: Support Vector Machines

2.1 Margin and support vectors

考虑分类问题 y i ∈ { − 1 , + 1 } y_i \in\{-1,+1\} y i ∈ { − 1 , + 1 }

y ^ = sign ( w ⊤ x + b ) = { + 1 , w ⊤ x + b ≥ 0 − 1 , w ⊤ x + b < 0 \hat{y}=\operatorname{sign}(w^{\top} x+b)= \begin{cases}+1, & w^{\top} x+b \geq 0 \\ -1, & w^{\top} x+b<0\end{cases}

y ^ = sign ( w ⊤ x + b ) = { + 1 , − 1 , w ⊤ x + b ≥ 0 w ⊤ x + b < 0

定义 margin:

γ i = y i ( w ⊤ x i + b ) \gamma_i=y_i(w^{\top} x_i+b)

γ i = y i ( w ⊤ x i + b )

γ i \gamma_i γ i γ i > 0 \gamma_i > 0 γ i > 0

max w , b min i = 1 , … , n γ i \max_{w, b}\min_{i=1, \dots, n} \gamma_i

w , b max i = 1 , … , n min γ i

但是,考虑到 γ i \gamma_i γ i w w w b b b ∥ w ∥ = 1 \Vert w \Vert = 1 ∥ w ∥ = 1

γ i = y i ( w ⊤ X i + b ) ∥ w ∥ = y i [ ( w ∥ w ∥ ) ⊤ X i + b ∥ w ∥ ] \gamma_i=\frac{y_i(w^{\top} X_i+b)}{\|w\|}= y_i\left[\left(\frac{w}{\|w\|}\right)^{\top} X_i+\frac{b}{\|w\|}\right]

γ i = ∥ w ∥ y i ( w ⊤ X i + b ) = y i [ ( ∥ w ∥ w ) ⊤ X i + ∥ w ∥ b ]

事实上,在分类正确的情况下,γ i \gamma_i γ i X i X_i X i w ⊤ x + b = 0 w^\top x+b = 0 w ⊤ x + b = 0 X i X_i X i γ i \gamma_i γ i X i − γ i w ∥ w ∥ y i X_i-\gamma_i \dfrac{w}{\|w\|} y_i X i − γ i ∥ w ∥ w y i

w ⊤ ( X i − γ i w ∥ w ∥ y i ) + b = ( w ⊤ X i + b ) − y i 2 w ⊤ ( w ⊤ X i + b ) w ∥ w ∥ 2 = ( w ⊤ X i + b ) − ( w ⊤ X i + b ) = 0 w^{\top}\left(X_i-\gamma_i \frac{w}{\|w\|} y_i\right)+b=\left(w^{\top} X_i+b\right)-y_i^2 \frac{w^{\top}\left(w^{\top} X_i+b\right) w}{\|w\|^2}=\left(w^{\top} X_i+b\right)-\left(w^{\top} X_i+b\right)=0

w ⊤ ( X i − γ i ∥ w ∥ w y i ) + b = ( w ⊤ X i + b ) − y i 2 ∥ w ∥ 2 w ⊤ ( w ⊤ X i + b ) w = ( w ⊤ X i + b ) − ( w ⊤ X i + b ) = 0

2.2 Margin classifier

假设所有样本都能分类正确. 为了最大化 margin,优化目标是:

max τ , w , b τ s.t. ∀ i , y i ( w ⊤ X i + b ) ≥ τ ∥ w ∥ = 1 \begin{aligned}

& \max _{\tau, w, b} \, \tau \\

\text { s.t. } \quad & \forall i, y_i(w^{\top} X_i+b) \geq \tau \\

& \|w\|=1

\end{aligned}

s.t. τ , w , b max τ ∀ i , y i ( w ⊤ X i + b ) ≥ τ ∥ w ∥ = 1

为了简化计算,将目标重写为:

max τ , w , b τ ∥ w ∥ s.t. ∀ i , y i ( w ⊤ X i + b ) ≥ τ \begin{aligned}

& \max _{\tau, w, b} \frac{\tau}{\|w\|} \\

\text { s.t. } \quad & \forall i, y_i\left(w^{\top} X_i+b\right) \geq \tau

\end{aligned}

s.t. τ , w , b max ∥ w ∥ τ ∀ i , y i ( w ⊤ X i + b ) ≥ τ

这时,由于我们可以缩放 w w w b b b τ = 1 \tau = 1 τ = 1

min w , b 1 2 ∥ w ∥ 2 s.t. ∀ i , y i ( w ⊤ X i + b ) ≥ 1 \begin{aligned}

& \min _{w, b} \frac{1}{2}\|w\|^2 \\

\text { s.t. } \quad & \forall i, y_i\left(w^{\top} X_i+b\right) \geq 1

\end{aligned}

s.t. w , b min 2 1 ∥ w ∥ 2 ∀ i , y i ( w ⊤ X i + b ) ≥ 1

Lagrange multipliers

考虑带等式约束的优化问题:

min w f ( w ) s.t. h k ( w ) = 0 , k = 1 , 2 , … , K \begin{aligned}

& \min _w f(w) \\

\text { s.t. } \quad & h_k(w)=0, k=1,2, \ldots, K

\end{aligned}

s.t. w min f ( w ) h k ( w ) = 0 , k = 1 , 2 , … , K

我们可以最小化

L ( w , λ ) = f ( w ) + ∑ k = 1 K λ k h k ( w ) L(w, \lambda)=f(w)+\sum_{k=1}^K \lambda_k h_k(w)

L ( w , λ ) = f ( w ) + k = 1 ∑ K λ k h k ( w )

从而要求

∂ L ∂ w = 0 , ∀ k , ∂ L ∂ λ k = 0 \frac{\partial L}{\partial w}=0, \quad \forall k, \frac{\partial L}{\partial \lambda_k}=0

∂ w ∂ L = 0 , ∀ k , ∂ λ k ∂ L = 0

加入不等式约束,即考虑一般的优化问题:

min w f ( w ) s.t. g k ( w ) ≤ 0 , k = 1 , 2 , … , K h l ( w ) = 0 , l = 1 , 2 , … , L \begin{aligned}

& \min _w f(w) \\

\text { s.t. } \quad & g_k(w) \leq 0, k=1,2, \ldots, K \\

& h_l(w)=0, l=1,2, \ldots, L

\end{aligned}

s.t. w min f ( w ) g k ( w ) ≤ 0 , k = 1 , 2 , … , K h l ( w ) = 0 , l = 1 , 2 , … , L

令

L ( w , α , β ) = f ( w ) + ∑ k = 1 K α k g k ( w ) + ∑ l = 1 L β l h l ( w ) L(w, \alpha, \beta)=f(w)+\sum_{k=1}^K \alpha_k g_k(w)+\sum_{l=1}^L \beta_l h_l(w)

L ( w , α , β ) = f ( w ) + k = 1 ∑ K α k g k ( w ) + l = 1 ∑ L β l h l ( w )

当满足

min w max α , β : α k ≥ 0 L ( w , α , β ) = max α , β : α k ≥ 0 min w L ( w , α , β ) \min _w \max _{\alpha, \beta: \alpha_k \geq 0} L(w, \alpha, \beta)=\max _{\alpha, \beta: \alpha_k \geq 0} \min _w L(w, \alpha, \beta)

w min α , β : α k ≥ 0 max L ( w , α , β ) = α , β : α k ≥ 0 max w min L ( w , α , β )

时,有 KKT 条件:

∀ p , ∂ ∂ w p L ( w , α , β ) = 0 ∀ l , ∂ ∂ β l L ( w , α , β ) = 0 ∀ k , α k g k ( w ) = 0 ∀ k , g k ( w ) ≤ 0 ∀ k , α k ≥ 0 \begin{aligned}

\forall p, \quad \frac{\partial}{\partial w_p} L(w, \alpha, \beta) & =0 \\

\forall l, \quad \frac{\partial}{\partial \beta_l} L(w, \alpha, \beta) & =0 \\

\forall k, \quad \alpha_k g_k(w) & =0 \\

\forall k, \quad g_k(w) & \leq 0 \\

\forall k, \quad \alpha_k & \geq 0

\end{aligned}

∀ p , ∂ w p ∂ L ( w , α , β ) ∀ l , ∂ β l ∂ L ( w , α , β ) ∀ k , α k g k ( w ) ∀ k , g k ( w ) ∀ k , α k = 0 = 0 = 0 ≤ 0 ≥ 0

Learning and support vectors

之前已经推出,SVM 的优化目标是:

min w , b 1 2 ∥ w ∥ 2 s.t. ∀ i , − y i ( w ⊤ X i + b ) + 1 ≤ 0 \begin{aligned}

& \min _{w, b} \frac{1}{2}\|w\|^2 \\

\text { s.t. } \quad & \forall i,-y_i\left(w^{\top} X_i+b\right)+1 \leq 0

\end{aligned}

s.t. w , b min 2 1 ∥ w ∥ 2 ∀ i , − y i ( w ⊤ X i + b ) + 1 ≤ 0

根据 KKT 条件,对于

− y i ( w ⊤ X i + b ) + 1 = 0 -y_i\left(w^{\top} X_i+b\right)+1=0

− y i ( w ⊤ X i + b ) + 1 = 0

的样本,才可能有

α i > 0 \alpha_i > 0

α i > 0

这些样本被称为 support vectors(支持向量) .

Optimization

SVM 的 Lagrangian 是:

L ( w , b , α ) = 1 2 ∥ w ∥ 2 − ∑ i = 1 n α i [ y i ( w ⊤ X i + b ) − 1 ] L(w, b, \alpha)=\frac{1}{2}\|w\|^2-\sum_{i=1}^n \alpha_i\left[y_i\left(w^{\top} X_i+b\right)-1\right]

L ( w , b , α ) = 2 1 ∥ w ∥ 2 − i = 1 ∑ n α i [ y i ( w ⊤ X i + b ) − 1 ]

由 KKT 条件,有:

∂ L ( w , b , α ) ∂ w = w − ∑ i = 1 n α i y i X i = 0 ∂ L ( w , b , α ) ∂ b = − ∑ i = 1 n α i y i = 0 \begin{aligned}

\frac{\partial L(w, b, \alpha)}{\partial w}&=w-\sum_{i=1}^n \alpha_i y_i X_i = 0 \\

\frac{\partial L(w, b, \alpha)}{\partial b}&=-\sum_{i=1}^n \alpha_i y_i = 0

\end{aligned}

∂ w ∂ L ( w , b , α ) ∂ b ∂ L ( w , b , α ) = w − i = 1 ∑ n α i y i X i = 0 = − i = 1 ∑ n α i y i = 0

从而有

{ w = ∑ i = 1 n α i y i X i ∑ i = 1 n α i y i = 0 \left\{ \begin{aligned}

& \quad w=\sum_{i=1}^n \alpha_i y_i X_i \\

& \quad \sum_{i=1}^n \alpha_i y_i=0

\end{aligned}\right.

⎩ ⎨ ⎧ w = i = 1 ∑ n α i y i X i i = 1 ∑ n α i y i = 0

代入 L ( w , b , α ) L(w, b, \alpha) L ( w , b , α )

L ( w , b , α ) = 1 2 ∥ w ∥ 2 − ∑ i = 1 n α i [ y i ( w ⊤ X i + b ) − 1 ] = 1 2 ∑ i = 1 n ∑ j = 1 n y i y j α i α j X i ⊤ X j − ∑ i = 1 n ∑ j = 1 n y i y j α i α j X i ⊤ X j − b ∑ i = 1 n α i y i + ∑ i = 1 n α i = ∑ i = 1 n α i − 1 2 ∑ i = 1 n ∑ j = 1 n y i y j α i α j X i ⊤ X j − b ∑ i = 1 n α i y i = ∑ i = 1 n α i − 1 2 ∑ i = 1 n ∑ j = 1 n y i y j α i α j X i ⊤ X j \begin{aligned}

L(w, b, \alpha) & =\frac{1}{2}\|w\|^2-\sum_{i=1}^n \alpha_i\left[y_i\left(w^{\top} X_i+b\right)-1\right] \\

& =\frac{1}{2} \sum_{i=1}^n \sum_{j=1}^n y_i y_j \alpha_i \alpha_j X_i^{\top} X_j-\sum_{i=1}^n \sum_{j=1}^n y_i y_j \alpha_i \alpha_j X_i^{\top} X_j-b \sum_{i=1}^n \alpha_i y_i+\sum_{i=1}^n \alpha_i \\

& =\sum_{i=1}^n \alpha_i-\frac{1}{2} \sum_{i=1}^n \sum_{j=1}^n y_i y_j \alpha_i \alpha_j X_i^{\top} X_j-b \sum_{i=1}^n \alpha_i y_i \\

& =\sum_{i=1}^n \alpha_i-\frac{1}{2} \sum_{i=1}^n \sum_{j=1}^n y_i y_j \alpha_i \alpha_j X_i^{\top} X_j

\end{aligned}

L ( w , b , α ) = 2 1 ∥ w ∥ 2 − i = 1 ∑ n α i [ y i ( w ⊤ X i + b ) − 1 ] = 2 1 i = 1 ∑ n j = 1 ∑ n y i y j α i α j X i ⊤ X j − i = 1 ∑ n j = 1 ∑ n y i y j α i α j X i ⊤ X j − b i = 1 ∑ n α i y i + i = 1 ∑ n α i = i = 1 ∑ n α i − 2 1 i = 1 ∑ n j = 1 ∑ n y i y j α i α j X i ⊤ X j − b i = 1 ∑ n α i y i = i = 1 ∑ n α i − 2 1 i = 1 ∑ n j = 1 ∑ n y i y j α i α j X i ⊤ X j

进而,我们可以用下式优化出 α \alpha α

max α W ( α ) , W ( α ) = ∑ i = 1 n α i − 1 2 ∑ i = 1 n ∑ j = 1 n y i y j α i α j ⟨ X i , X j ⟩ s.t. ∀ i , α i ≥ 0 ∑ i = 1 n α i y i = 0 \begin{aligned}

\max _\alpha W(\alpha), \quad W(\alpha)= & \sum_{i=1}^n \alpha_i-\frac{1}{2} \sum_{i=1}^n \sum_{j=1}^n y_i y_j \alpha_i \alpha_j\left\langle X_i, X_j\right\rangle \\

\text { s.t. } \quad & \forall i, \alpha_i \geq 0 \\

& \sum_{i=1}^n \alpha_i y_i=0

\end{aligned}

α max W ( α ) , W ( α ) = s.t. i = 1 ∑ n α i − 2 1 i = 1 ∑ n j = 1 ∑ n y i y j α i α j ⟨ X i , X j ⟩ ∀ i , α i ≥ 0 i = 1 ∑ n α i y i = 0

接着可以求出 w w w b b b

b = − 1 2 ( min i : y i = + 1 w ⊤ X i + max i : y i = − 1 w ⊤ X i ) b=-\frac{1}{2}\left(\min _{i: y_i=+1} w^{\top} X_i+\max _{i: y_i=-1} w^{\top} X_i\right)

b = − 2 1 ( i : y i = + 1 min w ⊤ X i + i : y i = − 1 max w ⊤ X i )

从而,inference 过程可以写为:

w ⊤ X i + b = ( ∑ j = 1 n α j y j X j ) ⊤ X i + b = ∑ j = 1 n α j y j ⟨ X j , X i ⟩ + b = ∑ j : α j > 0 α j y j ⟨ X j , X i ⟩ + b = ⟨ ∑ j : α j > 0 α j y j X j , X i ⟩ + b \begin{aligned}

w^{\top} X_i+b= & \left(\sum_{j=1}^n \alpha_j y_j X_j\right)^\top X_i+b \\

= & \sum_{j=1}^n \alpha_j y_j\left\langle X_j, X_i\right\rangle+b \\

= & \sum_{j: \alpha_j>0} \alpha_j y_j\left\langle X_j, X_i\right\rangle+b \\

= & \left\langle \sum_{j: \alpha_j>0} \alpha_j y_j X_j, X_i\right\rangle+b

\end{aligned}

w ⊤ X i + b = = = = ( j = 1 ∑ n α j y j X j ) ⊤ X i + b j = 1 ∑ n α j y j ⟨ X j , X i ⟩ + b j : α j > 0 ∑ α j y j ⟨ X j , X i ⟩ + b ⟨ j : α j > 0 ∑ α j y j X j , X i ⟩ + b

也就是说,只有支持向量对 w w w

2.3 Kernel-based SVM

考虑非线性分类问题,ϕ ( x ) \phi(x) ϕ ( x ) x x x

w ⊤ ϕ ( X i ) + b = ( ∑ j = 1 n α j y j ϕ ( X j ) ) ⊤ ϕ ( X i ) + b = ∑ j = 1 n α j y j ⟨ ϕ ( X j ) , ϕ ( X i ) ⟩ + b = ∑ j : α j > 0 α j y j ⟨ ϕ ( X j ) , ϕ ( X i ) ⟩ + b = ∑ j : α j > 0 α j y j K ( X j , X i ) + b \begin{aligned}

w^{\top} \phi\left(X_i\right)+b & =\left(\sum_{j=1}^n \alpha_j y_j \phi\left(X_j\right)\right)^{\top} \phi\left(X_i\right)+b \\

& =\sum_{j=1}^n \alpha_j y_j\left\langle\phi\left(X_j\right), \phi\left(X_i\right)\right\rangle+b \\

& =\sum_{j: \alpha_j>0} \alpha_j y_j\left\langle\phi\left(X_j\right), \phi\left(X_i\right)\right\rangle+b \\

& =\sum_{j: \alpha_j>0} \alpha_j y_j K\left(X_j, X_i\right)+b

\end{aligned}

w ⊤ ϕ ( X i ) + b = ( j = 1 ∑ n α j y j ϕ ( X j ) ) ⊤ ϕ ( X i ) + b = j = 1 ∑ n α j y j ⟨ ϕ ( X j ) , ϕ ( X i ) ⟩ + b = j : α j > 0 ∑ α j y j ⟨ ϕ ( X j ) , ϕ ( X i ) ⟩ + b = j : α j > 0 ∑ α j y j K ( X j , X i ) + b

其中

K ( X i , X j ) = def ϕ ( X i ) ⊤ ϕ ( X j ) K\left(X_i, X_j\right) \stackrel{\text { def }}{=} \phi\left(X_i\right)^{\top} \phi\left(X_j\right)

K ( X i , X j ) = def ϕ ( X i ) ⊤ ϕ ( X j )

被称为核(Kernel) . 也就是说,对之前求解的 SVM 结果,只要将 ⟨ X i , X j ⟩ \left\langle X_i, X_j\right\rangle ⟨ X i , X j ⟩ K ( X i , X j ) K(X_i, X_j) K ( X i , X j )

有时,K ( X i , X j ) K\left(X_i, X_j\right) K ( X i , X j ) ϕ ( X i ) \phi\left(X_i\right) ϕ ( X i ) K ( X i , X j ) K(X_i, X_j) K ( X i , X j ) ϕ ( X i ) \phi(X_i) ϕ ( X i )

K ( X i , X j ) K(X_i, X_j) K ( X i , X j )

对称性 :K ( X i , X j ) = K ( X j , X i ) K\left(X_i, X_j\right)=K\left(X_j, X_i\right) K ( X i , X j ) = K ( X j , X i )

半正定 :设核矩阵 K K K K i j = K ( X i , X j ) K_{ij} = K(X_i, X_j) K ij = K ( X i , X j ) ∀ z , z ⊤ K z ≥ 0 \forall z, z^\top K z \ge 0 ∀ z , z ⊤ Kz ≥ 0

2.4 Common kernels

RBF kernel :即 Gaussian kernel

K ( X i , X j ) = exp ( − ∥ X i − X j ∥ 2 2 σ 2 ) K\left(X_i, X_j\right)=\exp \left(-\frac{\left\|X_i-X_j\right\|^2}{2 \sigma^2}\right)

K ( X i , X j ) = exp ( − 2 σ 2 ∥ X i − X j ∥ 2 )

衡量了 X i X_i X i X j X_j X j

Simple polynomial kernel :

K ( X i , X j ) = ( X i ⊤ X j ) d K\left(X_i, X_j\right)=\left(X_i^{\top} X_j\right)^d

K ( X i , X j ) = ( X i ⊤ X j ) d

Cosine similarity kernel :

K ( X i , X j ) = X i ⊤ X j ∥ X i ∥ ∥ X j ∥ K\left(X_i, X_j\right)=\frac{X_i^{\top} X_j}{\left\|X_i\right\|\left\|X_j\right\|}

K ( X i , X j ) = ∥ X i ∥ ∥ X j ∥ X i ⊤ X j

Sigmoid kernel :

K ( X i , X j ) = tanh ( α X i ⊤ X j + c ) K\left(X_i, X_j\right)=\tanh \left(\alpha X_i^{\top} X_j+c\right)

K ( X i , X j ) = tanh ( α X i ⊤ X j + c )

2.5 With outliers

在现实生活中,我们不能保证全部正确分类. 此时的优化目标是:

min ξ , w , b 1 2 ∥ w ∥ 2 + C ∑ i = 1 n ξ i s.t. y i ( w ⊤ X i + b ) ≥ 1 − ξ i , i = 1 , 2 , … , n ξ i ≥ 0 , i = 1 , 2 , … , n \begin{aligned}

\min _{\xi, w, b} \quad& \frac{1}{2}\|w\|^2+C \sum_{i=1}^n \xi_i \\

\text { s.t. } \quad & y_i\left(w^{\top} X_i+b\right) \geq 1-\xi_i, \quad i=1,2, \ldots, n \\

& \xi_i \geq 0, \quad i=1,2, \ldots, n

\end{aligned}

ξ , w , b min s.t. 2 1 ∥ w ∥ 2 + C i = 1 ∑ n ξ i y i ( w ⊤ X i + b ) ≥ 1 − ξ i , i = 1 , 2 , … , n ξ i ≥ 0 , i = 1 , 2 , … , n

这等价于

min ξ , w , b 1 2 ∥ w ∥ 2 + C ∑ i = 1 n max ( 0 , 1 − y i ( w ⊤ X i + b ) ) \min _{\xi, w, b} \quad \frac{1}{2}\|w\|^2+C \sum_{i=1}^n \max \left(0,1-y_i\left(w^{\top} X_i+b\right)\right)

ξ , w , b min 2 1 ∥ w ∥ 2 + C i = 1 ∑ n max ( 0 , 1 − y i ( w ⊤ X i + b ) )

注意到,max ( 0 , 1 − y i ( w ⊤ X i + b ) ) \max \left(0,1-y_i\left(w^{\top} X_i+b\right)\right) max ( 0 , 1 − y i ( w ⊤ X i + b ) )

此时的 Lagrangian 是:

L ( w , b , ξ , α , β ) = 1 2 ∥ w ∥ 2 + C ∑ i = 1 n ξ i − ∑ i = 1 n α i [ y i ( w ⊤ X i + b ) − 1 + ξ i ] − ∑ i = 1 n β i ξ i L(w, b, \xi, \alpha, \beta)=\frac{1}{2}\|w\|^2+C \sum_{i=1}^n \xi_i-\sum_{i=1}^n \alpha_i\left[y_i\left(w^{\top} X_i+b\right)-1+\xi_i\right]-\sum_{i=1}^n \beta_i \xi_i

L ( w , b , ξ , α , β ) = 2 1 ∥ w ∥ 2 + C i = 1 ∑ n ξ i − i = 1 ∑ n α i [ y i ( w ⊤ X i + b ) − 1 + ξ i ] − i = 1 ∑ n β i ξ i

目标是:

min w , b , ξ : ξ i ≥ 0 max α , β : α i ≥ 0 , β i ≥ 0 L ( w , b , ξ , α , β ) \min _{w, b, \xi: \xi_i \geq 0} \max _{\alpha, \beta: \alpha_i \geq 0, \beta_i \geq 0} L(w, b, \xi, \alpha, \beta)

w , b , ξ : ξ i ≥ 0 min α , β : α i ≥ 0 , β i ≥ 0 max L ( w , b , ξ , α , β )

由

∂ L ( w , b , ξ , α , β ) ∂ w = 0 , ∂ L ( w , b , ξ , α , β ) ∂ b = 0 \frac{\partial L(w, b, \xi, \alpha, \beta)}{\partial w}=0, \quad\frac{\partial L(w, b, \xi, \alpha, \beta)}{\partial b}=0

∂ w ∂ L ( w , b , ξ , α , β ) = 0 , ∂ b ∂ L ( w , b , ξ , α , β ) = 0

可以得到优化 α \alpha α

max α W ( α ) , W ( α ) = ∑ i = 1 n α i − 1 2 ∑ i = 1 n ∑ j = 1 n y i y j α i α j ⟨ X i , X j ⟩ s.t. ∀ i , 0 ≤ α i ≤ C ∑ i = 1 n α i y i = 0 \begin{aligned}

\max _\alpha W(\alpha), \quad W(\alpha)= & \sum_{i=1}^n \alpha_i-\frac{1}{2} \sum_{i=1}^n \sum_{j=1}^n y_i y_j \alpha_i \alpha_j\left\langle X_i, X_j\right\rangle \\

\text { s.t. } \quad & \forall i, 0 \leq \alpha_i \leq C \\

& \sum_{i=1}^n \alpha_i y_i=0

\end{aligned}

α max W ( α ) , W ( α ) = s.t. i = 1 ∑ n α i − 2 1 i = 1 ∑ n j = 1 ∑ n y i y j α i α j ⟨ X i , X j ⟩ ∀ i , 0 ≤ α i ≤ C i = 1 ∑ n α i y i = 0

KKT 条件是:

α i = 0 ⇒ y i ( w ⊤ X i + b ) > 1 α i = C ⇒ y i ( w ⊤ X i + b ) < 1 0 < α i < C ⇒ y i ( w ⊤ X i + b ) = 1 \begin{aligned}

\alpha_i=0 & \Rightarrow y_i\left(w^{\top} X_i+b\right)>1 \\

\alpha_i=C & \Rightarrow y_i\left(w^{\top} X_i+b\right)<1 \\

0<\alpha_i<C & \Rightarrow y_i\left(w^{\top} X_i+b\right)=1

\end{aligned}

α i = 0 α i = C 0 < α i < C ⇒ y i ( w ⊤ X i + b ) > 1 ⇒ y i ( w ⊤ X i + b ) < 1 ⇒ y i ( w ⊤ X i + b ) = 1

Lecture 3: Kernels and Regularized Learning

3.1 Over-fitting & under-fitting

training set

validation set

Performance

error too large

irrelevant

Underfitting

error small

error too large

Overfitting

error small

error small

Ideal

3.2 Ridge Regression

为了防止过拟合,Ridge regression 引入惩罚项 λ ∥ β ∥ 2 \lambda\|\beta\|^2 λ ∥ β ∥ 2 λ > 0 \lambda > 0 λ > 0

ℓ ( β ) = ∥ Y − X β ∥ 2 + λ ∥ β ∥ 2 \ell(\beta)=\|Y-X \beta\|^2+\lambda\|\beta\|^2

ℓ ( β ) = ∥ Y − Xβ ∥ 2 + λ ∥ β ∥ 2

对损失求导,有:

ℓ ( β ) = ∥ Y − X β ∥ 2 + λ ∥ β ∥ 2 = ( Y − X β ) ⊤ ( Y − X β ) + λ β ⊤ β \ell(\beta)=\|Y-X \beta\|^2+\lambda\|\beta\|^2=(Y-X \beta)^{\top}(Y-X \beta)+\lambda \beta^{\top} \beta

ℓ ( β ) = ∥ Y − Xβ ∥ 2 + λ ∥ β ∥ 2 = ( Y − Xβ ) ⊤ ( Y − Xβ ) + λ β ⊤ β

0 = ∂ ℓ ( β ) ∂ β ∣ β = β ^ λ = − 2 X ⊤ ( Y − X β ^ λ ) + 2 λ β ^ λ 0=\left.\frac{\partial \ell(\beta)}{\partial \beta}\right|_{\beta=\hat{\beta}_\lambda}=-2 X^{\top}\left(Y-X \hat{\beta}_\lambda\right)+2 \lambda \hat{\beta}_\lambda

0 = ∂ β ∂ ℓ ( β ) β = β ^ λ = − 2 X ⊤ ( Y − X β ^ λ ) + 2 λ β ^ λ

从而得到 β \beta β

β ^ λ = ( X ⊤ X + λ I p ) − 1 X ⊤ Y \hat{\beta}_\lambda=\left(X^{\top} X+\lambda I_p\right)^{-1} X^{\top} Y

β ^ λ = ( X ⊤ X + λ I p ) − 1 X ⊤ Y

3.3 Kernel Regression

将 Ridge regression 中的 x x x ϕ ( x ) \phi(x) ϕ ( x ) K ( x , x i ) = ϕ ( x ) ⊤ ϕ ( x i ) K(x, x_i) = \phi(x)^\top \phi(x_i) K ( x , x i ) = ϕ ( x ) ⊤ ϕ ( x i )

在 inference 时,有:

f ( x ) = ϕ ( x ) ⊤ β f(x) = \phi(x)^\top \beta

f ( x ) = ϕ ( x ) ⊤ β

可以证明(见 3.11 节),

β = ∑ i c i ϕ ( x i ) \beta=\sum_i c_i \phi\left(x_i\right)

β = i ∑ c i ϕ ( x i )

从而

f ( x ) = ∑ i = 1 n c i K ( x , x i ) f(x)=\sum_{i=1}^n c_i K\left(x, x_i\right)

f ( x ) = i = 1 ∑ n c i K ( x , x i )

因此,目标就是要最小化:

l ( c ) = ∑ i = 1 n ∥ y i − ∑ j = 1 n c j K ( x i , x j ) ∥ 2 + λ ∑ i , j c i c j K ( x i , x j ) l(c) = \sum_{i=1}^n\|y_i-\sum_{j=1}^n c_j K\left(x_i, x_j\right)\|^2+\lambda \sum_{i, j} c_i c_j K\left(x_i, x_j\right)

l ( c ) = i = 1 ∑ n ∥ y i − j = 1 ∑ n c j K ( x i , x j ) ∥ 2 + λ i , j ∑ c i c j K ( x i , x j )

令 K i j = K ( x i , x j ) K_{ij} = K(x_i, x_j) K ij = K ( x i , x j )

ℓ ( c ) = ∑ i = 1 n ∥ y i − ∑ j = 1 n c j K i j ∥ 2 + λ ∑ i , j c i c j K i j = ∥ Y − K c ∥ 2 + λ c ⊤ K c \ell(c)=\sum_{i=1}^n\|y_i-\sum_{j=1}^n c_j K_{i j}\|^2+\lambda \sum_{i, j} c_i c_j K_{i j}=\|Y-K c\|^2+\lambda c^{\top} K c

ℓ ( c ) = i = 1 ∑ n ∥ y i − j = 1 ∑ n c j K ij ∥ 2 + λ i , j ∑ c i c j K ij = ∥ Y − Kc ∥ 2 + λ c ⊤ Kc

其中惩罚项:

c ⊤ K c = ∑ i , j c i c j K ( x i , x j ) = ∑ i , j c i c j ϕ ( x i ) ⊤ ϕ ( x j ) = ( ∑ i c i ϕ ( x i ) ) ⊤ ( ∑ i c i ϕ ( x i ) ) = ∥ β ∥ 2 \begin{aligned}

c^{\top} K c & =\sum_{i, j} c_i c_j K\left(x_i, x_j\right) \\

& =\sum_{i, j} c_i c_j \phi\left(x_i\right)^{\top} \phi\left(x_j\right) \\

& =\left(\sum_i c_i \phi\left(x_i\right)\right)^{\top}\left(\sum_i c_i \phi\left(x_i\right)\right) \\

& =\|\beta\|^2

\end{aligned}

c ⊤ Kc = i , j ∑ c i c j K ( x i , x j ) = i , j ∑ c i c j ϕ ( x i ) ⊤ ϕ ( x j ) = ( i ∑ c i ϕ ( x i ) ) ⊤ ( i ∑ c i ϕ ( x i ) ) = ∥ β ∥ 2

对损失求导,有:

ℓ ( c ) = ∥ Y − K c ∥ 2 + λ c ⊤ K c = ( Y − K c ) ⊤ ( Y − K c ) + λ c ⊤ K c 0 = ∂ ℓ ( c ) ∂ c ∣ c = c ^ λ = − 2 K ⊤ ( Y − K c ^ λ ) + 2 λ K c ^ λ \begin{gathered}

\ell(c)=\|Y-K c\|^2+\lambda c^{\top} K c=(Y-K c)^{\top}(Y-K c)+\lambda c^{\top} K c \\

0=\left.\frac{\partial \ell(c)}{\partial c}\right|_{c=\hat{c}_\lambda}=-2 K^{\top}\left(Y-K \hat{c}_\lambda\right)+2 \lambda K \hat{c}_\lambda

\end{gathered}

ℓ ( c ) = ∥ Y − Kc ∥ 2 + λ c ⊤ Kc = ( Y − Kc ) ⊤ ( Y − Kc ) + λ c ⊤ Kc 0 = ∂ c ∂ ℓ ( c ) c = c ^ λ = − 2 K ⊤ ( Y − K c ^ λ ) + 2 λ K c ^ λ

从而得到 c c c

c ^ λ = ( K + λ I n ) − 1 Y \hat{c}_\lambda=\left(K+\lambda I_n\right)^{-1} Y

c ^ λ = ( K + λ I n ) − 1 Y

3.4 Spline Regression

设 x ∈ R x \in \mathbb{R} x ∈ R

f ( x ) = α 0 + ∑ j = 1 p α j max ( 0 , x − k j ) f(x)=\alpha_0+\sum_{j=1}^p \alpha_j \max \left(0, x-k_j\right)

f ( x ) = α 0 + j = 1 ∑ p α j max ( 0 , x − k j )

从而最小化的目标是:

∑ i = 1 n ∥ y i − α 0 − ∑ j = 1 p α j max ( 0 , x i − k j ) ∥ 2 + λ ∑ j = 1 p α j 2 \sum_{i=1}^n\|y_i-\alpha_0-\sum_{j=1}^p \alpha_j \max \left(0, x_i-k_j\right)\|^2+\lambda \sum_{j=1}^p \alpha_j^2

i = 1 ∑ n ∥ y i − α 0 − j = 1 ∑ p α j max ( 0 , x i − k j ) ∥ 2 + λ j = 1 ∑ p α j 2

也可将拟合误差写成:

y i − α 0 − ∑ j = 1 p α j max ( 0 , x i − k j ) = y i − [ 1 , max ( 0 , x i − k 1 ) , max ( 0 , x i − k 2 ) , … , max ( 0 , x i − k p ) ] α y_i-\alpha_0-\sum_{j=1}^p \alpha_j \max \left(0, x_i-k_j\right)=y_i-\left[1, \max \left(0, x_i-k_1\right), \max \left(0, x_i-k_2\right), \ldots, \max \left(0, x_i-k_p\right)\right] \alpha

y i − α 0 − j = 1 ∑ p α j max ( 0 , x i − k j ) = y i − [ 1 , max ( 0 , x i − k 1 ) , max ( 0 , x i − k 2 ) , … , max ( 0 , x i − k p ) ] α

其中

α = [ α 0 , α 1 , α 2 , … , α p ] ⊤ . \alpha=\left[\alpha_0, \alpha_1, \alpha_2, \ldots, \alpha_p\right]^{\top}.

α = [ α 0 , α 1 , α 2 , … , α p ] ⊤ .

Relations to the Ridge regression

令

X ~ i j = max ( 0 , x i − k j ) Z = [ 1 n X ~ ] D = diag ( 0 , 1 , … , 1 ) \begin{aligned}

\widetilde{X}_{i j} & =\max \left(0, x_i-k_j\right) \\

Z & =\left[1_n ~ \widetilde{X}\right] \\

D & =\operatorname{diag}(0,1, \ldots, 1)

\end{aligned}

X ij Z D = max ( 0 , x i − k j ) = [ 1 n X ] = diag ( 0 , 1 , … , 1 )

那么目标函数可以写作:

ℓ ( α ) = ∥ Y − Z α ∥ 2 + λ ∥ D α ∥ 2 \ell(\alpha)=\|Y-Z \alpha\|^2+\lambda\|D \alpha\|^2

ℓ ( α ) = ∥ Y − Z α ∥ 2 + λ ∥ D α ∥ 2

解得 α \alpha α

α ^ λ = ( Z ⊤ Z + λ D ) − 1 Z ⊤ Y \hat{\alpha}_\lambda=\left(Z^{\top} Z+\lambda D\right)^{-1} Z^{\top} Y

α ^ λ = ( Z ⊤ Z + λ D ) − 1 Z ⊤ Y

Relations to the Kernel regression

将 k j k_j k j x j x_j x j K ( x i , x j ) = max ( 0 , x i − x j ) K\left(x_i, x_j\right)=\max \left(0, x_i-x_j\right) K ( x i , x j ) = max ( 0 , x i − x j )

f ^ ( x ) = ∑ j = 1 n α ^ j ⟨ x , x j ⟩ = ∑ j = 1 n α ^ j K ( x , x j ) \hat{f}(x)=\sum_{j=1}^n \hat{\alpha}_j\left\langle x, x_j\right\rangle=\sum_{j=1}^n \hat{\alpha}_j K\left(x, x_j\right)

f ^ ( x ) = j = 1 ∑ n α ^ j ⟨ x , x j ⟩ = j = 1 ∑ n α ^ j K ( x , x j )

3.5 Lasso regression

Lasso regression 的目标是优化

β ^ λ = arg min β [ 1 2 ∥ Y − X β ∥ ℓ 2 2 + λ ∥ β ∥ ℓ 1 ] \hat{\beta}_\lambda=\argmin_\beta\left[\frac{1}{2}\|\mathbf{Y}-\mathbf{X} \beta\|_{\ell_2}^2+\lambda\|\beta\|_{\ell_1}\right]

β ^ λ = β arg min [ 2 1 ∥ Y − X β ∥ ℓ 2 2 + λ ∥ β ∥ ℓ 1 ]

其中

∥ β ∥ ℓ 1 = ∑ j = 1 p ∣ β j ∣ \|\beta\|_{\ell_1}=\sum_{j=1}^p | \beta_j |

∥ β ∥ ℓ 1 = j = 1 ∑ p ∣ β j ∣

对于一般的 p p p p = 1 p = 1 p = 1

β ^ λ = { ( ⟨ Y , X ⟩ − λ ) / ∥ X ∥ ℓ 2 2 , if ⟨ Y , X ⟩ > λ ( ⟨ Y , X ⟩ + λ ) / ∥ X ∥ ℓ 2 2 , if ⟨ Y , X ⟩ < − λ 0 if ∣ ⟨ Y , X ⟩ ∣ ≤ λ \hat{\beta}_\lambda= \begin{cases}(\langle\mathbf{Y}, \mathbf{X}\rangle-\lambda) /\|\mathbf{X}\|_{\ell_2}^2, & \text { if }\langle\mathbf{Y}, \mathbf{X}\rangle>\lambda \\ (\langle\mathbf{Y}, \mathbf{X}\rangle+\lambda) /\|\mathbf{X}\|_{\ell_2}^2, & \text { if }\langle\mathbf{Y}, \mathbf{X}\rangle<-\lambda \\ 0 & \text { if }|\langle\mathbf{Y}, \mathbf{X}\rangle| \leq \lambda\end{cases}

β ^ λ = ⎩ ⎨ ⎧ (⟨ Y , X ⟩ − λ ) /∥ X ∥ ℓ 2 2 , (⟨ Y , X ⟩ + λ ) /∥ X ∥ ℓ 2 2 , 0 if ⟨ Y , X ⟩ > λ if ⟨ Y , X ⟩ < − λ if ∣ ⟨ Y , X ⟩ ∣ ≤ λ

即

β ^ λ = sign ( γ ^ ) max ( 0 , ∣ γ ^ ∣ − λ / ∥ X ∥ ℓ 2 2 ) \hat{\beta}_\lambda=\operatorname{sign}(\hat{\gamma}) \max \left(0,|\hat{\gamma}|-\lambda /\|\mathbf{X}\|_{\ell_2}^2\right)

β ^ λ = sign ( γ ^ ) max ( 0 , ∣ γ ^ ∣ − λ /∥ X ∥ ℓ 2 2 )

其中

γ ^ = ⟨ Y , X ⟩ / ∥ X ∥ ℓ 2 2 \hat{\gamma}=\langle\mathbf{Y}, \mathbf{X}\rangle /\|\mathbf{X}\|_{\ell_2}^2

γ ^ = ⟨ Y , X ⟩ /∥ X ∥ ℓ 2 2

Ridge regression 希望没有主导特征,而 Lasso regression 希望特征稀疏.

Lasso regression 有两种等价形式:

min ∥ Y − X β ∥ ℓ 2 2 / 2 subject to ∥ β ∥ ℓ 1 ≤ t \min ~ \|\mathbf{Y}-\mathbf{X} \beta\|_{\ell_2}^2 / 2 \quad \text{ subject to } \quad \|\beta\|_{\ell_1} \leq t

min ∥ Y − X β ∥ ℓ 2 2 /2 subject to ∥ β ∥ ℓ 1 ≤ t

min ∥ Y − X β ∥ ℓ 2 2 / 2 + λ ∥ β ∥ ℓ 1 \min ~ \|\mathbf{Y}-\mathbf{X} \beta\|_{\ell_2}^2 / 2+\lambda\|\beta\|_{\ell_1}

min ∥ Y − X β ∥ ℓ 2 2 /2 + λ ∥ β ∥ ℓ 1

接下来证明两种形式等价. 设两个式子有不相等的解:

β ^ λ = argmin ∥ Y − X β ∥ ℓ 2 2 / 2 + λ ∥ β ∥ ℓ 1 \hat{\beta}_\lambda=\operatorname{argmin}\|\mathbf{Y}-\mathbf{X} \beta\|_{\ell_2}^2 / 2+\lambda\|\beta\|_{\ell_1}

β ^ λ = argmin ∥ Y − X β ∥ ℓ 2 2 /2 + λ ∥ β ∥ ℓ 1

β ^ = argmin β ∥ Y − X β ∥ ℓ 2 2 / 2 s.t. ∥ β ∥ ℓ 1 ≤ t \hat{\beta}=\underset{\beta}{\operatorname{argmin}}\|\mathbf{Y}-\mathbf{X} \beta\|_{\ell_2}^2 / 2 \quad \text { s.t. } \quad\|\beta\|_{\ell_1} \leq t

β ^ = β argmin ∥ Y − X β ∥ ℓ 2 2 /2 s.t. ∥ β ∥ ℓ 1 ≤ t

令 t = ∥ β ^ λ ∥ ℓ 1 t=\|\hat{\beta}_\lambda\|_{\ell_1} t = ∥ β ^ λ ∥ ℓ 1

∥ Y − X β ^ ∥ ℓ 2 2 / 2 < ∥ Y − X β ^ λ ∥ ℓ 2 2 / 2 , ∥ β ^ ∥ ℓ 1 ≤ ∥ β ^ λ ∥ ℓ 1 \|\mathbf{Y}-\mathbf{X} \hat{\beta}\|_{\ell_2}^2 / 2<\|\mathbf{Y}-\mathbf{X} \hat{\beta}_\lambda\|_{\ell_2}^2 / 2, \quad\|\hat{\beta}\|_{\ell_1} \leq\|\hat{\beta}_\lambda\|_{\ell_1}

∥ Y − X β ^ ∥ ℓ 2 2 /2 < ∥ Y − X β ^ λ ∥ ℓ 2 2 /2 , ∥ β ^ ∥ ℓ 1 ≤ ∥ β ^ λ ∥ ℓ 1

从而

∥ Y − X β ^ ∥ ℓ 2 2 / 2 + λ ∥ β ^ ∥ ℓ 1 < ∥ Y − X β ^ λ ∥ ℓ 2 2 / 2 + λ ∥ β ^ λ ∥ ℓ 1 \|\mathbf{Y}-\mathbf{X} \hat{\beta}\|_{\ell_2}^2 / 2+\lambda\|\hat{\beta}\|_{\ell_1}<\|\mathbf{Y}-\mathbf{X} \hat{\beta}_\lambda\|_{\ell_2}^2 / 2+\lambda\|\hat{\beta}_\lambda\|_{\ell_1}

∥ Y − X β ^ ∥ ℓ 2 2 /2 + λ ∥ β ^ ∥ ℓ 1 < ∥ Y − X β ^ λ ∥ ℓ 2 2 /2 + λ ∥ β ^ λ ∥ ℓ 1

这与第一个式子矛盾,因此 β ^ λ = β ^ \hat{\beta}_\lambda = \hat{\beta} β ^ λ = β ^

3.7 Coordinate descent for Lasso solution path

高维 Lasso regression 的求解思路是:将每一个特征维度看作一维的 Lasso regression,从而用以下算法求解.

for λ = 10 a , 10 a − Δ , 10 a − 2 Δ , 10 a − 3 Δ , … , 10 b do for Feature dimension j = 1 , 2 , … , p do Compute the residual, R j = Y − ∑ k ≠ j X k β k ; Update the parameter of the j -th dimension, β j = sign ( γ ^ j ) max ( 0 , ∣ γ ^ j ∣ − λ / ∥ X ∥ ℓ 2 2 ) , where γ ^ j = ⟨ R j , X j ⟩ / ∥ X j ∥ ℓ 2 2 end end \begin{aligned}

&\textbf { for } ~\lambda=10^a, 10^{a-\Delta}, 10^{a-2 \Delta}, 10^{a-3 \Delta}, \ldots, 10^b \textbf{ ~do }\\

&\quad \textbf { for } \text{~Feature dimension } j=1,2, \ldots, p \textbf{ ~do }\\

&\quad \quad \text { Compute the residual, } \textstyle \mathbf{R}_j=\mathbf{Y}-\sum_{k \neq j} \mathbf{X}_k \beta_k \text {; }\\

&\quad \quad \text { Update the parameter of the } j \text {-th dimension, } \beta_j=\operatorname{sign}\left(\hat{\gamma}_j\right) \max \left(0,\left|\hat{\gamma}_j\right|-\lambda /\|\mathbf{X}\|_{\ell_2}^2\right) \text {, where } \hat{\gamma}_j=\left\langle\mathbf{R}_j, \mathbf{X}_j\right\rangle /\left\|\mathbf{X}_j\right\|_{\ell_2}^2\\

&\quad \textbf{ end }\\

&\textbf{ end }

\end{aligned}

for λ = 1 0 a , 1 0 a − Δ , 1 0 a − 2Δ , 1 0 a − 3Δ , … , 1 0 b do for Feature dimension j = 1 , 2 , … , p do Compute the residual, R j = Y − ∑ k = j X k β k ; Update the parameter of the j -th dimension, β j = sign ( γ ^ j ) max ( 0 , ∣ γ ^ j ∣ − λ /∥ X ∥ ℓ 2 2 ) , where γ ^ j = ⟨ R j , X j ⟩ / ∥ X j ∥ ℓ 2 2 end end

3.8 Bayesian regression

考虑最大似然估计

P ( β ∣ X , Y ) = P ( β ∣ X ) P ( Y ∣ X , β ) P ( Y ∣ X ) = P ( β ) P ( Y ∣ X , β ) ⋅ C \begin{aligned}

P(\beta | \mathbf{X}, \mathbf{Y}) & = \frac{P(\beta | \mathbf{X}) P(\mathbf{Y} | \mathbf{X}, \beta)}{P(\mathbf{Y} | \mathbf{X})} \\

& = P(\beta)P(\mathbf{Y} | \mathbf{X}, \beta) \cdot C

\end{aligned}

P ( β ∣ X , Y ) = P ( Y ∣ X ) P ( β ∣ X ) P ( Y ∣ X , β ) = P ( β ) P ( Y ∣ X , β ) ⋅ C

从而

log P ( β ∣ X , Y ) = log P ( β ) + log P ( Y ∣ X , β ) + C \log P(\beta | \mathbf{X}, \mathbf{Y}) = \log P(\beta) + \log P(\mathbf{Y} | \mathbf{X}, \beta) + C

log P ( β ∣ X , Y ) = log P ( β ) + log P ( Y ∣ X , β ) + C

设

β ∼ N ( 0 , τ 2 I p ) \beta \sim \mathrm{N}\left(0, \tau^2 \mathbf{I}_p\right)

β ∼ N ( 0 , τ 2 I p )

Y ∼ N ( X β , σ 2 I ) \mathbf{Y} \sim \mathrm{N}(\mathbf{X}\beta, \sigma^2\mathbf{I})

Y ∼ N ( X β , σ 2 I )

那么

log P ( β ∣ X , Y ) = − 1 2 σ 2 ∥ Y − X β ∥ ℓ 2 2 − 1 2 τ 2 ∥ β ∥ ℓ 2 2 + C \log P(\beta | \mathbf{X}, \mathbf{Y})=-\frac{1}{2 \sigma^2}\|\mathbf{Y}-\mathbf{X} \beta\|_{\ell_2}^2-\frac{1}{2 \tau^2}\|\beta\|_{\ell_2}^2+C

log P ( β ∣ X , Y ) = − 2 σ 2 1 ∥ Y − X β ∥ ℓ 2 2 − 2 τ 2 1 ∥ β ∥ ℓ 2 2 + C

要使似然函数最大,得到:

β ^ = ( X ⊤ X + σ 2 τ 2 I p ) − 1 X ⊤ Y \hat{\beta}=\left(\mathbf{X}^{\top} \mathbf{X}+\frac{\sigma^2}{\tau^2} \mathbf{I}_p\right)^{-1} \mathbf{X}^{\top} \mathbf{Y}

β ^ = ( X ⊤ X + τ 2 σ 2 I p ) − 1 X ⊤ Y

对应 λ = σ 2 / τ 2 \lambda=\sigma^2 / \tau^2 λ = σ 2 / τ 2

3.9 SVM and ridge logistic regression

考虑 SVM 的损失函数

loss ( β ) = ∑ i = 1 n max ( 0 , 1 − y i X i ⊤ β ) + λ 2 ∥ β ∥ 2 \operatorname{loss}(\beta)=\sum_{i=1}^n \max \left(0,1-y_i X_i^{\top} \beta\right)+\frac{\lambda}{2}\|\beta\|^2

loss ( β ) = i = 1 ∑ n max ( 0 , 1 − y i X i ⊤ β ) + 2 λ ∥ β ∥ 2

求梯度,得:

loss ′ ( β ) = − ∑ i = 1 n 1 ( y i X i ⊤ β < 1 ) y i X i + λ β \operatorname{loss}^{\prime}(\beta)=-\sum_{i=1}^n 1\left(y_i X_i^{\top} \beta<1\right) y_i X_i+\lambda \beta

loss ′ ( β ) = − i = 1 ∑ n 1 ( y i X i ⊤ β < 1 ) y i X i + λ β

该损失函数与 Ridge logistic regression 的形式类似,即:

loss ( β ) = ∑ i = 1 n log [ 1 + exp ( − y i X i ⊤ β ) ] + λ 2 ∥ β ∥ 2 \operatorname{loss}(\beta)=\sum_{i=1}^n \log \left[1+\exp \left(-y_i X_i^{\top} \beta\right)\right]+\frac{\lambda}{2}\|\beta\|^2

loss ( β ) = i = 1 ∑ n log [ 1 + exp ( − y i X i ⊤ β ) ] + 2 λ ∥ β ∥ 2

其梯度为:

loss ′ ( β ) = − ∑ i = 1 n sigmoid ( − y i X i ⊤ β ) y i X i + λ β \operatorname{loss}^{\prime}(\beta)=-\sum_{i=1}^n \operatorname{sigmoid}\left(-y_i X_i^{\top} \beta\right) y_i X_i+\lambda \beta

loss ′ ( β ) = − i = 1 ∑ n sigmoid ( − y i X i ⊤ β ) y i X i + λ β

3.10 Linear Version

若优化目标可以写作

∑ i = 1 n L ( y i ; x i ⊤ β ) + λ ∥ β ∥ 2 \sum_{i=1}^n L\left(y_i ; x_i^{\top} \beta\right)+\lambda\|\beta\|^2

i = 1 ∑ n L ( y i ; x i ⊤ β ) + λ ∥ β ∥ 2

那么解空间的形式为:

β ^ = ∑ i = 1 n α i x i \hat{\beta}=\sum_{i=1}^n \alpha_i x_i

β ^ = i = 1 ∑ n α i x i

采用反证法,设最优解

β ~ = ∑ i = 1 n α i x i + ∑ k = 1 K κ k x k \tilde{\beta}=\sum_{i=1}^n \alpha_i x_i+\sum_{k=1}^K \kappa_k x_k

β ~ = i = 1 ∑ n α i x i + k = 1 ∑ K κ k x k

其中 x k ⊥ x i x_k \perp x_i x k ⊥ x i

x i T β ~ = x i T ( ∑ j = 1 n α j x j + ∑ k = 1 K κ k x k ) = ∑ j = 1 n α j x i T x j + ∑ k = 1 K κ k x i T x k = ∑ j = 1 n α j x i T x j + ∑ k = 1 K κ k 0 = ∑ j = 1 n α j x i T x j = x i T β ^ \begin{aligned}

x_i^T \tilde{\beta} & =x_i^T\left(\sum_{j=1}^n \alpha_j x_j+\sum_{k=1}^K \kappa_k x_k\right) \\

& =\sum_{j=1}^n \alpha_j x_i^T x_j+\sum_{k=1}^K \kappa_k x_i^T x_k \\

& =\sum_{j=1}^n \alpha_j x_i^T x_j+\sum_{k=1}^K \kappa_k 0 \\

& =\sum_{j=1}^n \alpha_j x_i^T x_j \\

& =x_i^T \hat{\beta}

\end{aligned}

x i T β ~ = x i T ( j = 1 ∑ n α j x j + k = 1 ∑ K κ k x k ) = j = 1 ∑ n α j x i T x j + k = 1 ∑ K κ k x i T x k = j = 1 ∑ n α j x i T x j + k = 1 ∑ K κ k 0 = j = 1 ∑ n α j x i T x j = x i T β ^

从而

∑ i = 1 n L ( y i ; x i ⊤ β ^ ) + λ ∥ β ^ ∥ 2 ≤ ∑ i = 1 n L ( y i ; x i ⊤ β ~ ) + λ ∥ β ~ ∥ 2 \sum_{i=1}^n L(y_i ; x_i^{\top} \hat{\beta})+\lambda\|\hat{\beta}\|^2 \leq \sum_{i=1}^n L(y_i ; x_i^{\top} \tilde{\beta})+\lambda\|\tilde{\beta}\|^2

i = 1 ∑ n L ( y i ; x i ⊤ β ^ ) + λ ∥ β ^ ∥ 2 ≤ i = 1 ∑ n L ( y i ; x i ⊤ β ~ ) + λ ∥ β ~ ∥ 2

因此,β ^ \hat{\beta} β ^

3.11 Feature version

若优化目标可以写作

∑ i = 1 n L ( y i ; ϕ ( x i ) ⊤ β ) + λ ∥ β ∥ 2 \sum_{i=1}^n L(y_i ; \phi(x_i)^{\top} \beta)+\lambda\|\beta\|^2

i = 1 ∑ n L ( y i ; ϕ ( x i ) ⊤ β ) + λ ∥ β ∥ 2

那么解空间的形式为:

β ^ = ∑ i = 1 n α i ϕ ( x i ) \hat{\beta}=\sum_{i=1}^n \alpha_i \phi(x_i)

β ^ = i = 1 ∑ n α i ϕ ( x i )

证明过程与 3.10 中类似,在此省略.

3.12 Gaussian Process and Bayesian Estimation

Linear version

对于 Y = X β + ϵ Y=X \beta+\epsilon Y = Xβ + ϵ β ∼ N ( 0 , τ 2 I p ) , ϵ ∼ N ( 0 , σ 2 I n ) \beta \sim \mathrm{N}\left(0, \tau^2 I_p\right), \epsilon \sim \mathrm{N}\left(0, \sigma^2 I_n\right) β ∼ N ( 0 , τ 2 I p ) , ϵ ∼ N ( 0 , σ 2 I n ) β \beta β ϵ \epsilon ϵ β \beta β Pr [ β ∣ Y , X ] \operatorname{Pr}[\beta | Y, X] Pr [ β ∣ Y , X ]

引理 :设 X 1 X_1 X 1 X 2 X_2 X 2 [ X 1 X 2 ] ∼ N ( [ μ 1 μ 2 ] , [ Σ 11 Σ 12 Σ 21 Σ 22 ] ) \begin{bmatrix}

X_1 \\

X_2

\end{bmatrix} \sim N\left(\begin{bmatrix}

\mu_1 \\

\mu_2

\end{bmatrix},\begin{bmatrix}

\Sigma_{11} & \Sigma_{12} \\

\Sigma_{21} & \Sigma_{22}

\end{bmatrix}\right)

[ X 1 X 2 ] ∼ N ( [ μ 1 μ 2 ] , [ Σ 11 Σ 21 Σ 12 Σ 22 ] )

则Pr [ X 2 ∣ X 1 ] ∼ N ( μ 2 + Σ 21 Σ 11 − 1 ( X 1 − μ 1 ) , Σ 22 − Σ 21 Σ 11 − 1 Σ 12 ] \operatorname{Pr}\left[X_2 | X_1\right] \sim N\left(\mu_2+\Sigma_{21} \Sigma_{11}^{-1}\left(X_1-\mu_1\right), \Sigma_{22}-\Sigma_{21} \Sigma_{11}^{-1} \Sigma_{12}\right]

Pr [ X 2 ∣ X 1 ] ∼ N ( μ 2 + Σ 21 Σ 11 − 1 ( X 1 − μ 1 ) , Σ 22 − Σ 21 Σ 11 − 1 Σ 12 ]

首先,求解 Y = X β + ϵ Y = X\beta + \epsilon Y = Xβ + ϵ

E [ Y ] = X E [ β ] + E [ ϵ ] = 0 \operatorname{E}[Y]=X \operatorname{E}[\beta]+\operatorname{E}[\epsilon]=0

E [ Y ] = X E [ β ] + E [ ϵ ] = 0

方差为:

Var [ Y ] = Var [ X β ] + Var [ ϵ ] = X Var [ β ] X ⊤ + σ 2 I n = τ 2 X X ⊤ + σ 2 I n \begin{aligned}

\operatorname{Var}[Y] & =\operatorname{Var}[X \beta]+\operatorname{Var}[\epsilon] \\

& =X \operatorname{Var}[\beta] X^\top+\sigma^2 I_n \\

& =\tau^2 X X^\top+\sigma^2 I_n

\end{aligned}

Var [ Y ] = Var [ Xβ ] + Var [ ϵ ] = X Var [ β ] X ⊤ + σ 2 I n = τ 2 X X ⊤ + σ 2 I n

因此,Y Y Y

Y ∼ N ( 0 , τ 2 X X ⊤ + σ 2 I n ) Y \sim N\left(0, \tau^2 X X^\top+\sigma^2 I_n\right)

Y ∼ N ( 0 , τ 2 X X ⊤ + σ 2 I n )

再求 Y Y Y β \beta β

Cov ( Y , β ) = E i [ ( Y i − E j [ Y j ] ) ( β i − E j [ β j ] ) ⊤ ] = E i [ ( X β i + ϵ i − E j [ X β j + ϵ j ] ) ( β i − E j [ β j ] ) ⊤ ] = E i [ ( X β i + ϵ i − E j [ X β j ] − E j [ ϵ j ] ) ( β i ) ⊤ ] = E i [ ( X β i + ϵ i − E j [ X β j ] ) β i ⊤ ] = E i [ X β i β i ⊤ ] + E i [ ϵ i β i ⊤ ] − E i [ E j [ X β j ] β i ⊤ ] = E i [ X β i β i ⊤ ] + 0 − E i [ 0 β i ⊤ ] = X E i [ β i β i ⊤ ] = X ( τ 2 I p ) = τ 2 X \begin{aligned}

\operatorname{Cov}(Y, \beta) & =E_i\left[\left(Y_i-E_j\left[Y_j\right]\right)\left(\beta_i-E_j\left[\beta_j\right]\right)^{\top}\right] \\

& =E_i\left[\left(X \beta_i+\epsilon_i-E_j\left[X \beta_j+\epsilon_j\right]\right)\left(\beta_i-E_j\left[\beta_j\right]\right)^{\top}\right] \\

& =E_i\left[\left(X \beta_i+\epsilon_i-E_j\left[X \beta_j\right]-E_j\left[\epsilon_j\right]\right)\left(\beta_i\right)^{\top}\right] \\

& =E_i\left[\left(X \beta_i+\epsilon_i-E_j\left[X \beta_j\right]\right) \beta_i^{\top}\right] \\

& =E_i\left[X \beta_i \beta_i^{\top}\right]+E_i\left[\epsilon_i \beta_i^{\top}\right]-E_i\left[E_j\left[X \beta_j\right] \beta_i^{\top}\right] \\

& =E_i\left[X \beta_i \beta_i^{\top}\right]+0-E_i\left[0 \beta_i^{\top}\right] \\

& =X E_i\left[\beta_i \beta_i^{\top}\right] \\

& =X\left(\tau^2 I_p\right) \\

& =\tau^2 X

\end{aligned}

Cov ( Y , β ) = E i [ ( Y i − E j [ Y j ] ) ( β i − E j [ β j ] ) ⊤ ] = E i [ ( X β i + ϵ i − E j [ X β j + ϵ j ] ) ( β i − E j [ β j ] ) ⊤ ] = E i [ ( X β i + ϵ i − E j [ X β j ] − E j [ ϵ j ] ) ( β i ) ⊤ ] = E i [ ( X β i + ϵ i − E j [ X β j ] ) β i ⊤ ] = E i [ X β i β i ⊤ ] + E i [ ϵ i β i ⊤ ] − E i [ E j [ X β j ] β i ⊤ ] = E i [ X β i β i ⊤ ] + 0 − E i [ 0 β i ⊤ ] = X E i [ β i β i ⊤ ] = X ( τ 2 I p ) = τ 2 X

因此,

[ Y β ] ∼ N ( [ 0 0 ] , [ τ 2 X X T + σ 2 I n τ 2 X τ 2 X T τ 2 I p ] ) \begin{bmatrix}

Y \\

\beta

\end{bmatrix} \sim N\left(\begin{bmatrix}

0 \\

0

\end{bmatrix},\begin{bmatrix}

\tau^2 X X^T+\sigma^2 I_n & \tau^2 X \\

\tau^2 X^T & \tau^2 I_p

\end{bmatrix}\right)

[ Y β ] ∼ N ( [ 0 0 ] , [ τ 2 X X T + σ 2 I n τ 2 X T τ 2 X τ 2 I p ] )

从而

Pr [ β ∣ Y , X ] = N ( τ 2 X T ( τ 2 X X T + σ 2 I n ) − 1 Y , τ 2 I p − τ 2 X T ( τ 2 X X T + σ 2 I n ) − 1 τ 2 X ) \begin{equation}

\operatorname{Pr}[\beta | Y, X]=N\left(\tau^2 X^T\left(\tau^2 X X^T+\sigma^2 I_n\right)^{-1} Y, \tau^2 I_p-\tau^2 X^T\left(\tau^2 X X^T+\sigma^2 I_n\right)^{-1} \tau^2 X\right)

\end{equation}

Pr [ β ∣ Y , X ] = N ( τ 2 X T ( τ 2 X X T + σ 2 I n ) − 1 Y , τ 2 I p − τ 2 X T ( τ 2 X X T + σ 2 I n ) − 1 τ 2 X )

可以验证,该后验的均值与 λ = σ 2 / τ 2 \lambda=\sigma^2 / \tau^2 λ = σ 2 / τ 2 β \beta β

Feature version

对于 y i = ϕ ( x i ) ⊤ β + ϵ i y_i=\phi\left(x_i\right)^{\top} \beta+\epsilon_i y i = ϕ ( x i ) ⊤ β + ϵ i Pr [ β ∣ Y , X ] \operatorname{Pr}[\beta | Y, X] Pr [ β ∣ Y , X ]

ϕ ( X ) = [ ϕ ( x 1 ) ⊤ ϕ ( x 2 ) ⊤ … ϕ ( x n ) ⊤ ] n × d \phi(X)=\begin{bmatrix}

\phi\left(x_1\right)^{\top} \\

\phi\left(x_2\right)^{\top} \\

\ldots \\

\phi\left(x_n\right)^{\top}

\end{bmatrix}_{n \times d}

ϕ ( X ) = ϕ ( x 1 ) ⊤ ϕ ( x 2 ) ⊤ … ϕ ( x n ) ⊤ n × d

那么只需要将 (3) 式中的 X X X ϕ ( X ) \phi(X) ϕ ( X )

Pr [ β ∣ Y , X ] = N ( τ 2 ϕ ( X ) T ( τ 2 ϕ ( X ) ϕ ( X ) T + σ 2 I n ) − 1 Y , V ) \operatorname{Pr}[\beta | Y, X]=N\left(\tau^2 \phi(X)^T\left(\tau^2 \phi(X) \phi(X)^T+\sigma^2 I_n\right)^{-1} Y, V\right)

Pr [ β ∣ Y , X ] = N ( τ 2 ϕ ( X ) T ( τ 2 ϕ ( X ) ϕ ( X ) T + σ 2 I n ) − 1 Y , V )

其中

V = τ 2 I p − τ 2 ϕ ( X ) T ( τ 2 ϕ ( X ) ϕ ( X ) T + σ 2 I n ) − 1 τ 2 ϕ ( X ) V=\tau^2 I_p-\tau^2 \phi(X)^T\left(\tau^2 \phi(X) \phi(X)^T+\sigma^2 I_n\right)^{-1} \tau^2 \phi(X)

V = τ 2 I p − τ 2 ϕ ( X ) T ( τ 2 ϕ ( X ) ϕ ( X ) T + σ 2 I n ) − 1 τ 2 ϕ ( X )

不难发现,V V V Y Y Y β \beta β ϕ ( x ) \phi(x) ϕ ( x ) Y Y Y

Kernel version

设 f ( x ) = ϕ ( x ) ⊤ β f(x)=\phi(x)^{\top} \beta f ( x ) = ϕ ( x ) ⊤ β

Y = [ y 1 y 2 ⋮ y n ] = [ f ( x 1 ) f ( x 2 ) ⋮ f ( x n ) ] + ϵ Y=\begin{bmatrix}

y_1 \\

y_2 \\

\vdots \\

y_n

\end{bmatrix}=\begin{bmatrix}

f\left(x_1\right) \\

f\left(x_2\right) \\

\vdots \\

f\left(x_n\right)

\end{bmatrix}+\epsilon

Y = y 1 y 2 ⋮ y n = f ( x 1 ) f ( x 2 ) ⋮ f ( x n ) + ϵ

由于

Cov ( f ( x ) , f ( x ′ ) ) = Cov ( ϕ ( x ) T β , ϕ ( x ′ ) T β ) = τ 2 ϕ ( x ) T ϕ ( x ′ ) \operatorname{Cov}\left(f(x), f\left(x^{\prime}\right)\right)=\operatorname{Cov}\left(\phi(x)^T \beta, \phi\left(x^{\prime}\right)^T \beta\right)=\tau^2 \phi(x)^T \phi\left(x^{\prime}\right)

Cov ( f ( x ) , f ( x ′ ) ) = Cov ( ϕ ( x ) T β , ϕ ( x ′ ) T β ) = τ 2 ϕ ( x ) T ϕ ( x ′ )

令

K ( x , x ′ ) = τ 2 ϕ ( x ) T ϕ ( x ′ ) K\left(x, x^{\prime}\right) = \tau^2 \phi(x)^T \phi\left(x^{\prime}\right)

K ( x , x ′ ) = τ 2 ϕ ( x ) T ϕ ( x ′ )

那么 Y Y Y

Y ∼ N ( 0 , K + σ 2 I n ) Y \sim N\left(\mathbf{0}, \boldsymbol{K}+\sigma^2 \boldsymbol{I}_{\boldsymbol{n}}\right)

Y ∼ N ( 0 , K + σ 2 I n )

即

Y ∼ N ( [ 0 ⋮ 0 ] , [ τ 2 ϕ ( x 1 ) T ϕ ( x 1 ) + σ 2 … τ 2 ϕ ( x 1 ) T ϕ ( x n ) ⋮ ⋱ ⋮ τ 2 ϕ ( x n ) T ϕ ( x 1 ) … τ 2 ϕ ( x n ) T ϕ ( x n ) + σ 2 ] ) Y \sim N\left(\begin{bmatrix}

0 \\

\vdots \\

0

\end{bmatrix},\begin{bmatrix}

\tau^2 \phi\left(x_1\right)^T \phi\left(x_1\right)+\sigma^2 & \dots & \tau^2 \phi\left(x_1\right)^T \phi\left(x_n\right) \\

\vdots & \ddots & \vdots \\

\tau^2 \phi\left(x_n\right)^T \phi\left(x_1\right) & \ldots & \tau^2 \phi\left(x_n\right)^T \phi\left(x_n\right)+\sigma^2

\end{bmatrix}\right)

Y ∼ N 0 ⋮ 0 , τ 2 ϕ ( x 1 ) T ϕ ( x 1 ) + σ 2 ⋮ τ 2 ϕ ( x n ) T ϕ ( x 1 ) … ⋱ … τ 2 ϕ ( x 1 ) T ϕ ( x n ) ⋮ τ 2 ϕ ( x n ) T ϕ ( x n ) + σ 2

从而

[ Y f ( x 0 ) ] = N ( [ 0 0 ] , [ K + σ 2 I n K ( x , x 0 ) K ( x 0 , x ) K ( x 0 , x 0 ) ] ( n + 1 ) × ( n + 1 ) ) \begin{bmatrix}

Y \\

f\left(x_0\right)

\end{bmatrix}=N\left(\begin{bmatrix}

\mathbf{0} \\

0

\end{bmatrix},\begin{bmatrix}

\boldsymbol{K}+\sigma^2 \boldsymbol{I}_{\boldsymbol{n}} & \boldsymbol{K}\left(\boldsymbol{x}, x_0\right) \\

\boldsymbol{K}\left(x_0, \boldsymbol{x}\right) & K\left(x_0, x_0\right)

\end{bmatrix}_{(n+1) \times(n+1)}\right)

[ Y f ( x 0 ) ] = N ( [ 0 0 ] , [ K + σ 2 I n K ( x 0 , x ) K ( x , x 0 ) K ( x 0 , x 0 ) ] ( n + 1 ) × ( n + 1 ) )

因此,

Pr [ f ( x 0 ) ∣ Y , X ] ∼ N ( K ( x 0 , x ) ( K + σ 2 I n ) − 1 Y , K ( x 0 , x 0 ) − K ( x 0 , x ) ( K + σ 2 I n ) − 1 K ( x 0 , x ) T ) \operatorname{Pr}\left[f\left(x_0\right) | Y, X\right] \sim N\left(K\left(x_0, x\right)\left(K+\sigma^2 I_n\right)^{-1} Y, K\left(x_0, x_0\right)-K\left(x_0, x\right)\left(K+\sigma^2 I_n\right)^{-1} K\left(x_0, x\right)^T\right)

Pr [ f ( x 0 ) ∣ Y , X ] ∼ N ( K ( x 0 , x ) ( K + σ 2 I n ) − 1 Y , K ( x 0 , x 0 ) − K ( x 0 , x ) ( K + σ 2 I n ) − 1 K ( x 0 , x ) T )

该方差表明了在测试样本 x 0 x_0 x 0

Marginal likelihood

我们已经推出,Y Y Y

Y ∼ N ( 0 , K γ + σ 2 I n ) Y \sim \mathrm{N}\left(0, K_\gamma+\sigma^2 I_n\right)

Y ∼ N ( 0 , K γ + σ 2 I n )

其中 γ \gamma γ Y Y Y γ \gamma γ

1 ( 2 π ) n / 2 ∣ Σ γ ∣ 1 / 2 exp ( − 1 2 Y T Σ γ − 1 Y ) \frac{1}{(2 \pi)^{n / 2}\left|\Sigma_\gamma\right|^{1 / 2}} \exp \left(-\frac{1}{2} Y^T \Sigma_\gamma^{-1} Y\right)

( 2 π ) n /2 ∣ Σ γ ∣ 1/2 1 exp ( − 2 1 Y T Σ γ − 1 Y )

其中 Σ γ = K γ + σ 2 I n \Sigma_\gamma=K_\gamma+\sigma^2 I_n Σ γ = K γ + σ 2 I n

l = − 1 2 Y T Σ γ − 1 Y − 1 2 log ( ∣ Σ γ ∣ ) − n 2 log ( 2 π ) l=-\frac{1}{2} Y^T \Sigma_\gamma^{-1} Y-\frac{1}{2} \log \left(\left|\Sigma_\gamma\right|\right)-\frac{n}{2} \log (2 \pi)

l = − 2 1 Y T Σ γ − 1 Y − 2 1 log ( ∣ Σ γ ∣ ) − 2 n log ( 2 π )

接着由最大化 l l l γ \gamma γ

Lecture 4: Neural Networks

4.1 Neural networks

4.1.1 Two-layer perceptron

设双层感知机的输出 y i ∈ { 0 , 1 } y_i \in \{0, 1\} y i ∈ { 0 , 1 } h i = ( h i k , k = 1 , … , d ) ⊤ h_i=\left(h_{i k}, k=1, \ldots, d\right)^{\top} h i = ( h ik , k = 1 , … , d ) ⊤ h i k h_{ik} h ik X i = ( x i j , j = 1 , … , p ) ⊤ X_i=\left(x_{i j}, j=1, \ldots, p\right)^{\top} X i = ( x ij , j = 1 , … , p ) ⊤

y i ∼ Bernoulli ( p i ) , p i = sigmoid ( h i ⊤ β ) = sigmoid ( ∑ k = 1 d β k h i k ) , h i k = sigmoid ( X i ⊤ α k ) = sigmoid ( ∑ j = 1 p α k j x i j ) . \begin{aligned}

& y_i \sim \operatorname{Bernoulli}\left(p_i\right), \\